Archive for May, 2010

Gradual Refactoring

Posted by Petter Måhlén in Code Management on May 19, 2010

We’re right now in the middle of something that feels a little like an unplanned experiment in code management. Unplanned in the sense that I didn’t expect us to work the way we do, not so much in the sense that I think it is particularly risky. It started not quite a year ago with my colleague Mateusz suggesting that we should dedicate a fixed percentage of the story points in each sprint to what he called maintenance or technical stories. That soon crystallised into a backlog owned by me, where we schedule stories that are aimed at somehow improving our productivity as opposed to our product. So Paul, the ‘normal’ product owner, is in charge of the main backlog that deals with improving the products, and I am the owner of the maintenance backlog, where we improve the efficiency of how we work by improving processes, tools and removing technical debt. We’re aiming at spending about 15% of our time on technical backlog stories, and we more or less do.

Typical examples of stories that have gone onto our technical backlog are:

- A tool that allows QA to specify that outgoing service calls matching certain regular expressions should return mock data specified in a file rather than actually calling the service. This makes us a lot more productive with regard to verifying site behaviour in certain hard-to-recreate and data-dependent cases.

- Improvements to our performance monitoring systems and tools that make it easier for us to figure out where we have performance problems when we do.

- Auditing and optimising the QA server allocation in order to speed up especially our automated test scripts.

- Various refactoring stories that clean up code where functional evolution has led to the original design no longer being suitable.
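The first item in the list above, the mock-data tool, could be sketched roughly as follows. This is not our actual tool, just a minimal illustration of the idea: the class name `MockRules`, its methods, and the URLs and file names are all made up for the example, and the lookup only decides which mock file applies rather than reading it.

```java
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;
import java.util.Optional;
import java.util.regex.Pattern;

// Hypothetical sketch: maps outgoing service URLs to mock data files via regular expressions.
final class MockRules {

    // One rule: calls whose URL matches the pattern get their response from the mock file.
    private static final class Rule {
        final Pattern urlPattern;
        final Path mockFile;

        Rule(Pattern urlPattern, Path mockFile) {
            this.urlPattern = urlPattern;
            this.mockFile = mockFile;
        }
    }

    private final List<Rule> rules = new ArrayList<>();

    void addRule(String urlRegex, String mockFile) {
        rules.add(new Rule(Pattern.compile(urlRegex), Paths.get(mockFile)));
    }

    /** Returns the mock file for the first matching rule, or empty if the real service should be called. */
    Optional<Path> mockFileFor(String serviceUrl) {
        for (Rule rule : rules) {
            if (rule.urlPattern.matcher(serviceUrl).matches()) {
                return Optional.of(rule.mockFile);
            }
        }
        return Optional.empty();
    }

    public static void main(String[] args) {
        MockRules rules = new MockRules();
        rules.addRule(".*/inventory/.*", "mocks/out-of-stock.json");
        System.out.println(rules.mockFileFor("http://svc/inventory/42").isPresent());
        System.out.println(rules.mockFileFor("http://svc/search?q=tv").isPresent());
    }
}
```

A caller would check `mockFileFor` before making the real call and fall through to the service when the result is empty.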

That has worked out pretty much as expected: we’ve gained benefits from the productivity improvements and we continue to spend 5-6 times more effort on money-making product improvements than on engineering-driven platform-building.

The unexpected thing that has happened is that we’re heading towards a situation where we have different design generations that solve similar problems. As an example, the original pattern we used to create Spring MVC controllers has broken down, so we’ve come up with a new one that is better though not yet perfect. In order to have stories small enough to complete within one iteration, we’ve had to apply this pattern on a controller-by-controller basis – each refactoring has taken about 2 weeks of calendar time so far, except the first one which took about 4, so the effort isn’t trivial. There are nearly 40 controller implementations in our site code and about 95% of the incoming traffic is handled by 4 of those. We’re now at a stage where we’ve refactored three of those four controllers. Given that the other 35 or so controllers a) don’t serve a lot of traffic, so don’t have a lot of business value and b) don’t get changed a lot because the functions they provide aren’t ones that we need to innovate in, I don’t feel like refactoring all of them. In fact, the next refactoring story in the backlog is aimed at a different area in the site, where a repeated pattern has broken down in a similar way.

My initial gut reaction was that we should apply any new pattern for a commonly occurring situation across the board, then tackle the next similar situation. That keeps the code clean and makes it easy to find your way around. But the point of refactoring is that it must be an investment that you can recoup, and if we haven’t spent more than 4 hours working on a particular controller in the last year, what are the chances of ever recouping an investment of a man-week? We would probably have to keep using the same controller for at least 20 years, assuming the refactoring made us twice as productive, and that doesn’t seem likely to happen. Given that, it seems like the best option is to focus refactoring efforts where they give return on investment, which is those parts of the code that you do most of your work in.
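The break-even arithmetic behind that 20-year figure can be spelled out. This is a small sketch that assumes a man-week is 40 working hours (my assumption for the example); the class and method names are purely illustrative.

```java
// Break-even estimate for a refactoring investment.
// Assumption for the example: one man-week = 40 working hours.
final class RefactoringBreakEven {

    /**
     * Years until the time saved by a refactoring equals its cost.
     *
     * @param costHours        one-off cost of the refactoring
     * @param hoursPerYear     time currently spent per year working in that code
     * @param productivityGain speed-up factor after the refactoring (2.0 = twice as productive)
     */
    static double yearsToBreakEven(double costHours, double hoursPerYear, double productivityGain) {
        if (productivityGain <= 1.0) {
            return Double.POSITIVE_INFINITY; // no speed-up, so the cost is never recouped
        }
        // Work that took hoursPerYear now takes hoursPerYear / productivityGain.
        double hoursSavedPerYear = hoursPerYear - hoursPerYear / productivityGain;
        return costHours / hoursSavedPerYear;
    }

    public static void main(String[] args) {
        // 40 hours invested, 4 hours of work per year, twice as productive afterwards.
        System.out.println(yearsToBreakEven(40, 4, 2.0)); // prints 20.0
    }
}
```

With 4 hours of work per year and a doubling of productivity, only 2 hours are saved each year, which is why a 40-hour investment takes 20 years to pay back.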

I think we’re more or less permanently stuck with two different generations of controller patterns. But it will be interesting to see what will happen over the next year or two – can this super-pattern of having annual growth rings of standardised solution patterns handle more than two generations? I’m not sure, but I believe it can.

Immutability: a constraint that empowers

Posted by Petter Måhlén in Java, Software Development on May 4, 2010

In Effective Java (Second Edition, item 15), Josh Bloch mentions that he is of the opinion that Java programmers should have the default mindset that their objects should be immutable, and that mutability should be used only when strictly necessary. The reason for this post is that most Java code I read doesn’t seem to follow this advice, and I have come to the conclusion that immutability is an amazing concept. My theory is that one of the reasons why immutability isn’t as widely used as it should be is that as programmers or architects, we usually want to open up possibilities: a flexible architecture allows for future growth and changes and gives as much freedom as possible to solve coding problems in the most suitable way. Immutability seems to go against that desire for flexibility in that it takes an option away: if your architecture involves immutable objects, you’ve reduced your freedom because those objects can’t change any longer. How can that be good?

The two main motivations for using immutability according to Effective Java are that a) it is easier to share immutable objects between threads and b) immutable objects are fundamentally simple because they cannot change state once instantiated. The former seems to be the most commonly repeated argument in favour of using immutable objects – I think probably because it is far more concrete: make objects immutable and they can easily be shared by threads. The latter, that immutable objects are fundamentally simple, is the more powerful (or at least more ubiquitous) reason in my opinion, but the argument is harder to make. After all, there’s nothing you can do with an immutable object that you cannot also do with a mutable one, so why constrain your freedom? I hope to illustrate a couple of ways that you both open up macro-level architectural options and improve your code on the micro level by removing your ability to modify things.

Macro Level: Distributed Systems

First, I would like to spend some time on the concurrency argument and look at it from a more general perspective than just Java. Let’s take something that is familiar to anybody who has worked on a distributed system: caching. A cache is a (more) local copy of something where the master copy is for some reason expensive to fetch. Caching is extremely powerful and it is used literally everywhere in computing. The fact that the item in the cache could be different to the master copy, due to either one of them having changed, leads to a lot of problems that need solving. So there are algorithms like read-through, refresh-ahead, write-through, write-behind, and so on, that deal with the problem that the data in your cache or caches may be outdated or in a ‘future state’ when compared to the master copy. These problems are well-studied and solved, but even so, having to deal with stale data in caches adds complexity to any system that has to deal with it. With immutable objects, these problems just vanish. If you have a copy of a certain object, it is guaranteed to be the current one, because the object never changes. That is extremely powerful in designing a distributed architecture.

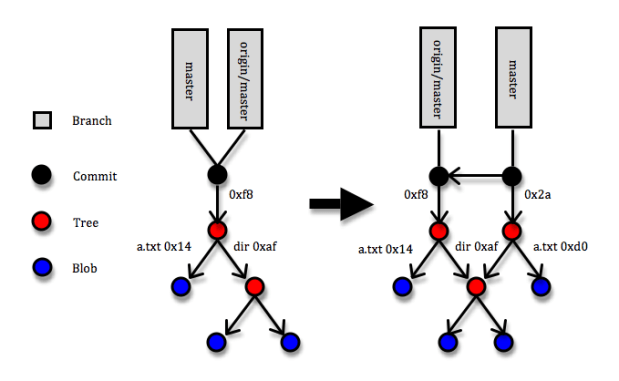

I wonder if I can write a blog post that doesn’t mention Git – apparently not this one at least :). Git is a marvellous example of a distributed architecture whose power comes to a large degree from reducing mutability. Most of the entities in Git are immutable: commits, trees and blobs, all of which are referenced via keys that are SHA-1 hashes of their contents. If a blob (a file) changes, a new entity in Git is created with a new hash code. If a tree (a directory – essentially a list of hash codes and names that identify the trees or blobs that live underneath it) changes through a file being added, removed or modified, a new tree entity with new contents and a new hash code is created. And whenever some changes are committed to a Git repository, a commit entity that describes the change set is created (with a reference to the new top tree entity, some metadata about who made the commit and when, a pointer to the previous commit or commits, etc). Since the reference to each object is uniquely defined by its content, two identical objects that are created separately will always have the same key. (If you want to learn more about Git internals, here is a great though not free explanation.) So what makes that brilliant? The answer is that the immutability of objects means your copy is guaranteed to be correct, and SHA-1 object references mean that identical objects are guaranteed to have identical references, which speeds up identity checks – since collisions are extremely unlikely, identical references can be treated as meaning identical objects. Suppose somebody makes a commit that includes a blob (file) with hash code 14 and you fetch the commit to your local Git repository, and suppose further that a blob with hash code 14 is already in your repository. When Git fetches the commit, it’ll come to the tree that references blob 14 and simply note that “I’ve already got blob 14, so no need to fetch it”.
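To make the "I've already got blob 14" idea concrete, here is a toy content-addressed store in Java. It is only a sketch of the principle and not Git's real object format (Git hashes a type and length header along with the content); `ContentStore` and its method names are invented for the example.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.HashMap;
import java.util.Map;

// Toy content-addressed store: objects never change and are keyed by the SHA-1 of their content.
final class ContentStore {
    private final Map<String, byte[]> objects = new HashMap<>();

    /** The key is derived purely from the content, so identical content always gets the same key. */
    static String keyFor(byte[] content) {
        try {
            byte[] digest = MessageDigest.getInstance("SHA-1").digest(content);
            StringBuilder hex = new StringBuilder();
            for (byte b : digest) {
                hex.append(String.format("%02x", b));
            }
            return hex.toString();
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("SHA-1 not available", e);
        }
    }

    /** Stores a private copy of the content unless an object with the same key already exists. */
    String put(byte[] content) {
        String key = keyFor(content);
        objects.putIfAbsent(key, content.clone());
        return key;
    }

    /** A key we already hold never needs fetching: the object behind it cannot have changed. */
    boolean needsFetch(String key) {
        return !objects.containsKey(key);
    }

    int size() {
        return objects.size();
    }

    public static void main(String[] args) {
        ContentStore local = new ContentStore();
        String first = local.put("hello".getBytes(StandardCharsets.UTF_8));
        String second = local.put("hello".getBytes(StandardCharsets.UTF_8));
        System.out.println(first.equals(second)); // same content, same key
        System.out.println(local.size());         // stored only once
    }
}
```

Because a key can never refer to different content later on, `needsFetch` is a plain lookup with no staleness check.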

The diagram above shows a simplified picture of a Git repository where a commit is added. The root of the repository is a folder with a file called ‘a.txt’ and a directory called ‘dir’. Under the directory there are a couple more files. In the commit, the a.txt file is changed. This means that three new entities are created: the new version of a.txt (hash code 0xd0), the new version of the root directory (hash code 0x2a), and the commit itself. Note that the new root directory still points to the old version of the ‘dir’ directory and that none of the objects that describe the previous version of the root directory are affected in any way.

That Git uses immutable objects in combination with references derivable from the content of objects is what opens up possibilities for being very efficient in terms of networking (only transferring necessary objects), local storage space (storing identical objects only once), comparisons and merges (when you reach a point where two trees point to the same object, you stop), etc., etc. Note that this usage of immutable objects is quite different from the one you read about in books like Effective Java and Java Concurrency in Practice – in those books, you can easily get the impression that immutability is something that requires taking some pretty special measures with regard to how you specify your classes, inheritance and constructors. I’d say that is only a part of the truth: if you do take those special measures in your Java code, you’ll get the benefit of some specific guarantees made by the Java Memory Model that will ensure you can safely share your immutable objects between threads inside a JVM. But even without solid guarantees about immutability like the ones the JVM can provide, using immutability can provide huge architectural gains. So while Git doesn’t have any means of enforcing strict immutability – I can just edit the contents of something stored under .git/objects, with probable chaos ensuing – utilising it opens the way to a very powerful architecture.

When you’re writing multi-threaded Java programs, use the strong guarantees you can get from defining your Java classes correctly, but don’t be afraid to make immutability a core architectural idea even if there is no way to actually formally enforce it.
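For reference, the "special measures" on the Java side look roughly like this: a final class, final private fields, no mutators, and defensive copies of anything mutable that is passed in. The `Playlist` class is a made-up example, not something from Effective Java.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

// A made-up immutable value class.
final class Playlist {                      // final: no subclass can add mutable state
    private final String name;              // final fields, assigned once in the constructor
    private final List<String> tracks;

    Playlist(String name, List<String> tracks) {
        this.name = name;
        // Defensive copy, wrapped read-only: later changes to the caller's list can't leak in.
        this.tracks = Collections.unmodifiableList(new ArrayList<>(tracks));
    }

    String name() {
        return name;
    }

    List<String> tracks() {
        return tracks;                      // safe to hand out, it is unmodifiable
    }

    /** "Modification" returns a new object and leaves this one untouched. */
    Playlist withTrack(String track) {
        List<String> extended = new ArrayList<>(tracks);
        extended.add(track);
        return new Playlist(name, extended);
    }

    public static void main(String[] args) {
        Playlist original = new Playlist("mix", new ArrayList<>(List.of("a", "b")));
        Playlist extended = original.withTrack("c");
        System.out.println(original.tracks().size()); // still 2
        System.out.println(extended.tracks().size()); // 3
    }
}
```

Since every field is final and nothing mutable escapes, an instance can be handed to other threads without extra synchronisation.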

Micro Level: Building Blocks

I guess the clearest point I can make about immutability, like most of the others who have written about it, is still one about concurrent execution. But I am a believer also in the second point about the simplicity that immutability gives you even if your system isn’t distributed. For me, the essence of that point is that you can disregard any immutable object as a source of weirdness when you’re trying to figure out how the code in front of you is working. Once you have confirmed to yourself that an immutable object is being instantiated correctly, it is guaranteed to remain correct for the rest of the logical flow you’re looking at. You can filter out that object and focus your valuable brainpower on other things. As a former chess player, I see a strong analogy: once you’ve played a bit, you stop seeing three pawns, a rook and a king, you see a king-side castle formation. This is a single unit, and you know how it interacts with the rest of the pieces on the board. This allows you to simplify your mental model of the board, reducing clutter and giving you a clearer picture of the essentials. A mutable object resists simplification, so you’ll constantly need to be alert to something that might change it and affect the logic.

On top of the simplicity that the classes themselves gain, by making them immutable, you can communicate your intent as a designer clearly: “this object is read-only, and if you believe you want to change it in order to make some modification to the functionality of this code, you’ve either not yet understood how the design fits together or requirements have changed to such an extent that the design doesn’t support them any longer”. Immutable objects make for extremely solid and easily understood building blocks and give the readers clear signals about their intended usage.

When immutability doesn’t cut the mustard

There are very few things that are purely beneficial, and immutability is of course no exception. So what are the problems you run into with immutability? Well, first of all, no system is interesting without mutable state, so you’ll need at least some objects that are mutable – in Git, branches are a prime example of a mutable object, and hence also the one source of conflicts between different repositories. A branch is simply a pointer to the latest commit on that branch, and when you create new commits on a branch, the pointer is updated. In the diagram above, that is reflected by the ‘master’ label having moved to point to the latest commit, but the local copy of where ‘origin/master’ is pointing is unchanged and will remain so until the next push or fetch happens. In the meantime, the actual branch on the origin repository may have changed, so Git needs to handle possible conflicts there. So the branches suffer from the normal cache-related staleness and concurrent update problems, but thanks to most entities being immutable, these problems are confined to a small section of the system.
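The same split can be mirrored in Java: immutable commits, plus one small mutable pointer where all the conflict handling lives. This is only an analogy to how branches behave, not a description of Git's implementation; `Commit`, `Branch` and `advance` are names invented for the sketch.

```java
import java.util.concurrent.atomic.AtomicReference;

// Immutable: once created, a commit and its history never change.
final class Commit {
    final String id;
    final Commit parent; // null for the very first commit

    Commit(String id, Commit parent) {
        this.id = id;
        this.parent = parent;
    }
}

// Mutable: a branch is just a pointer to the latest commit.
final class Branch {
    private final AtomicReference<Commit> head;

    Branch(Commit initialHead) {
        this.head = new AtomicReference<>(initialHead);
    }

    Commit head() {
        return head.get();
    }

    /**
     * Moves the branch only if it still points at the commit the caller based its work on.
     * A false return means somebody else moved the branch first, i.e. a conflict to resolve.
     */
    boolean advance(Commit expectedHead, Commit newHead) {
        return head.compareAndSet(expectedHead, newHead);
    }

    public static void main(String[] args) {
        Commit base = new Commit("c1", null);
        Branch master = new Branch(base);
        Commit theirs = new Commit("c2-theirs", base);
        Commit mine = new Commit("c2-mine", base);
        System.out.println(master.advance(base, theirs)); // true: branch moved
        System.out.println(master.advance(base, mine));   // false: my view of the branch was stale
    }
}
```

All staleness and concurrent-update handling sits in `advance`; the commits themselves never need it.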

Another thing you’ll need, which is provided for free by a JVM but not in systems in general, is garbage collection. You’ll keep creating new objects instead of changing the existing ones, and for most kinds of systems, that means you’ll want to get rid of the outdated stuff. Most of the time, that stuff will simply sit on some disk somewhere, and disk space is cheap, which means you don’t need to get super-sophisticated with your garbage collection algorithms. In general, the memory allocation and garbage collection overhead that you incur from creating new objects instead of updating existing ones is much less of a problem than it seems at first glance. I’ve personally run into one case where it was clearly impossible to make objects immutable: in Ardor3D, an open-source Java 3D engine, where the most commonly used class is Vector3, a vector of three doubles. This feels like a great candidate for being immutable since it is a typical small, simple value object. However, the main task of a 3D engine is to update many thousands of positions of things at least 50 times per second, so in this case, creating a new object for every update was out of the question. Our solution there was to signal ‘immutability intent’ by having the Vector3 class (and some similar classes) implement a ReadOnlyVector3 interface and use that interface when some class needed a vector but wasn’t planning on modifying it.
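The read-only interface approach looks roughly like this. The sketch is heavily simplified and is not the actual Ardor3D source: only the names Vector3 and ReadOnlyVector3 come from the engine, while the methods and the `Geometry` helper are assumptions made for the example.

```java
// Read-only view: code that accepts this type promises not to modify the vector.
interface ReadOnlyVector3 {
    double getX();
    double getY();
    double getZ();
}

// The mutable implementation, updated in place to avoid allocating a new object per frame.
final class Vector3 implements ReadOnlyVector3 {
    private double x;
    private double y;
    private double z;

    Vector3(double x, double y, double z) {
        this.x = x;
        this.y = y;
        this.z = z;
    }

    @Override public double getX() { return x; }
    @Override public double getY() { return y; }
    @Override public double getZ() { return z; }

    /** Adds to this vector in place and returns it, so no new object is created. */
    Vector3 addLocal(double dx, double dy, double dz) {
        x += dx;
        y += dy;
        z += dz;
        return this;
    }
}

final class Geometry {
    /** Takes the read-only type: the signature itself says "I only look at this vector". */
    static double length(ReadOnlyVector3 v) {
        return Math.sqrt(v.getX() * v.getX() + v.getY() * v.getY() + v.getZ() * v.getZ());
    }

    public static void main(String[] args) {
        Vector3 position = new Vector3(3, 4, 0);
        System.out.println(length(position)); // prints 5.0
    }
}
```

This is intent rather than enforcement: a caller can still cast back to Vector3, and the owner of the object can change it while a reader holds the read-only view.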

There’s a lot more solid but semi-concrete advice about why immutability is useful outside of the context of sharing state between threads in Effective Java, so if you’re not convinced about it yet, I would urge you to re-read item 15. Or maybe the whole book, since everything Josh Bloch says is fantastic. To me, the use of immutability in Git shows that removing some aspects of freedom can unlock opportunities in other parts of the system. I also think that you should do everything you can do that will make your code easier for the reader to understand. Reducing mutability can have a game-changing impact on the macro level of your system, and it is guaranteed to help remove mental clutter and thereby improve your code on the micro level.

Code sharing: Use Maven

Posted by Petter Måhlén in Code Management on May 1, 2010

Maven’s slow progress towards becoming the most accepted Java build tool seems to continue, although a lot of people are still annoyed enough with its numerous warts to prefer Ant or something else. My personal opinion is that Maven is the best build solution for Java programs that is out there, and as somebody said – I’ve been trying to find the quote, but I can’t seem to locate it – when an Ant build is complicated, you blame yourself for writing a bad build.xml, but when it is hard to get Maven to behave, you blame Maven. With Ant, you program it, so any problems are clearly due to a poorly structured program. With Maven you don’t tell it how to do things, you try to tell it what should be done, so any problems feel like the fault of the tool. The thing is, though, that Maven tries to take much more responsibility for some of the issues that lead to complex build scripts than something like Ant does.

I’ve certainly spent a lot of time cursing poorly written build scripts for Ant and other tools, and I’ve also spent a lot of time cursing Maven when it doesn’t do what I want it to. But the latter time is decreasing as Maven keeps improving as a build tool. There have been lots of attempts to create other tools that are supposed to make builds easier than Maven, but from what I have seen, nothing has yet really succeeded in providing a clearly better option (I’ve looked at Buildr and Raven, for instance). I think the truth is simply that the build process for a large system is a complex problem to solve, so one cannot expect it to be free of hassles. Maven is the best tool out there for the moment, but will surely be replaced by something better at some point.

So, using Maven isn’t going to be problem-free. But it can help with a lot of things, particularly in the context of sharing code between multiple teams. The obvious thing it helps with is the single benefit that most people agree that Maven has – its way of managing dependencies and the massive repository infrastructure and dependency database that is just available out there. On top of that, building Maven projects in Hudson is dead easy, and there’s a whole slew of really nice tools that come with Maven plugins that you can use that enable you to get all kinds of reports and metadata about your code. My current favourite is Sonar, which is great if you want to keep track of how your code base evolves from some kind of aggregated perspective.

Here are some things you’ll want to do if you decide to use Maven for the various projects that make up your system:

- Use Nexus as an internal repository for build artifacts.

- Use the Maven Release plugin to create releases of internal artifacts.

- Create a shared POM for the whole code base where you can define shared settings for your builds.

The word ‘repository’ is a little overloaded in Maven, so it may be confusing. Here’s a diagram that explains the concept and shows some of the things that a repository manager like Nexus can help you with:

The setup includes a Git server (because you use Git) for source control, a Hudson server (or a set of them) that does continuous integration, a Nexus-managed artifact repository and a developer machine. The Nexus server has three repositories in it: internal releases, internal snapshots and a cache of external repositories. The latter is only there as a performance improvement. The other two are the way that you distribute Maven artifacts within your organisation. When a Maven build runs on the Hudson or developer machines, Maven will use artifacts from the local repository on the machine – by default located in a folder under the user’s home directory. If a released version of an artifact isn’t present in the local repository, it will be downloaded from Nexus, and snapshot versions will periodically be refreshed, even if present locally. In the example setup, new snapshots are typically deployed to the Nexus repository by the Hudson server, and released versions are typically deployed by the developer producing the release. Note that both Hudson and developers are likely to install snapshots to the local repository.

I’ve tried a couple of other repository managers (Archiva, Artifactory and Maven-Proxy), but Nexus has been by a pretty wide margin the best – robust, easy to use and easy to understand. It’s been a year or two since I looked at the other ones, so they may have improved since.

Having an internal repository opens up code sharing by providing a uniform mechanism for distributing updated versions of internal libraries using the standard Maven deploy command. Maven has two types of artifact versions: releases and snapshots. Releases are assumed to be immutable and snapshots mutable, so updating a snapshot in the internal repository will affect any build that downloads the updated snapshot, whereas releases are supposed to be deployed to the internal repository once only – any subsequent deployments should deploy something that is identical. Snapshots are tricky, especially when branching. If you create two branches of the same library and fail to ensure that the two branches have different snapshot versions, the two branches will interfere with each other.

There is interference between the two branches because they both create updates to the same artifact in the Maven repositories. Depending on the ordering of these updates, builds may succeed or fail seemingly at random. At Shopzilla, we typically solve this problem in two ways: for some shared projects, where we have long-lived/permanent team-specific branches, the team name is included in the version number of the artifact, and for short-lived user story branches, the story ID is included in the version number. So if I need to create a branch off of version 2.3-SNAPSHOT for story S3765, I’ll typically label the branch S3765 and change the version of the Maven artifact to 2.3-S3765-SNAPSHOT. The Maven release plugin has a command that simplifies branching, but for whatever reason, I never seem to use it. Either way, being careful about managing branches and Maven versions is necessary.
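The version naming convention described above is simple enough to express as a small helper. This is just an illustration of the rule, with a made-up class name; it isn't part of Maven or of any tool we use.

```java
// Illustrative helper for the branch version convention: 2.3-SNAPSHOT + S3765 -> 2.3-S3765-SNAPSHOT.
final class BranchVersions {
    private static final String SNAPSHOT_SUFFIX = "-SNAPSHOT";

    /** Inserts a branch qualifier (story ID or team name) in front of the -SNAPSHOT suffix. */
    static String forBranch(String snapshotVersion, String qualifier) {
        if (!snapshotVersion.endsWith(SNAPSHOT_SUFFIX)) {
            throw new IllegalArgumentException("Not a snapshot version: " + snapshotVersion);
        }
        String base = snapshotVersion.substring(0, snapshotVersion.length() - SNAPSHOT_SUFFIX.length());
        return base + "-" + qualifier + SNAPSHOT_SUFFIX;
    }

    public static void main(String[] args) {
        System.out.println(forBranch("2.3-SNAPSHOT", "S3765")); // prints 2.3-S3765-SNAPSHOT
    }
}
```

Two branches with different qualifiers then deploy to different snapshot artifacts and no longer overwrite each other.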

A situation where I do use the Maven release plugin a lot is when making releases of shared libraries. I advocate a workflow where you make a new release of your top-level project every time you make a live update, and because you want to make live updates frequently and you use scrum, that means a new Maven release with every iteration. To make a Maven release of a project, you have to eliminate all snapshot dependencies – this is a necessary requirement for immutability – so releasing the top-level project means making release versions of all its updated dependencies. Doing this frequently reduces the risk of interference between teams by shortening the ‘checkout, modify, checkin’ cycle.
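The "no snapshot dependencies" rule boils down to a simple check over the dependency versions. The sketch below only illustrates the rule and is not how the release plugin is implemented; the class name and the artifact coordinates are made up.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Illustration of the rule: a release must not depend on anything that can still change.
final class ReleaseCheck {

    /** Returns the dependencies (artifact -> version) that still point at a snapshot version. */
    static List<String> snapshotDependencies(Map<String, String> dependencyVersions) {
        List<String> offenders = new ArrayList<>();
        for (Map.Entry<String, String> dependency : dependencyVersions.entrySet()) {
            if (dependency.getValue().endsWith("-SNAPSHOT")) {
                offenders.add(dependency.getKey());
            }
        }
        return offenders;
    }

    public static void main(String[] args) {
        Map<String, String> dependencies = new LinkedHashMap<>();
        dependencies.put("com.mycompany:search-client", "2.3-SNAPSHOT"); // hypothetical artifact
        dependencies.put("com.mycompany:common-utils", "1.4");           // hypothetical artifact
        System.out.println(snapshotDependencies(dependencies)); // prints [com.mycompany:search-client]
    }
}
```

Every artifact in the returned list has to be released first, which is what pushes you towards releasing shared libraries along with the top-level project.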

See the pom file example below for some hands-on pom.xml settings that are needed to enable using the release plugin.

The final tip for code sharing using Maven that I wanted to give is to use a shared parent POM that contains settings that should be shared between projects. The main reason is to reduce code duplication – any build file is code, after all, and Maven build files are not as easy to understand as one would like, so simplifying them is very valuable. Here’s some stuff that I think should go into a shared pom.xml file:

    <project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/maven-v4_0_0.xsd">
      <modelVersion>4.0.0</modelVersion>
      <groupId>com.mycompany</groupId>
      <artifactId>shared-pom</artifactId>
      <name>Company Shared pom</name>
      <version>1.0-SNAPSHOT</version>
      <packaging>pom</packaging>

      <!--
        One of the things that is necessary in order to be able to use the
        release plugin is to specify the scm/developerConnection element.
        I usually also specify the plain connection, although
        I think that is only used for generating project
        documentation, a Maven feature I don't find particularly useful
        personally.
        A section like this needs to be present in every project for which
        you want to be able to use the release plugin, with the
        project-specific Git URL.
      -->
      <scm>
        <connection>scm:git:git://GITHOST/GITPROJECT</connection>
        <developerConnection>scm:git:git://GITHOST/GITPROJECT</developerConnection>
      </scm>

      <build>
        <!--
          Use the plugins section to define Maven plugin configurations that
          you want to share between all projects.
        -->
        <plugins>
          <!--
            Compiler settings that are typically going to be identical in all
            projects. With a name like Måhlén, you get particularly sensitive
            to using the only useful character encoding there is.. ;)
          -->
          <plugin>
            <artifactId>maven-compiler-plugin</artifactId>
            <configuration>
              <source>1.6</source>
              <target>1.6</target>
              <encoding>UTF-8</encoding>
            </configuration>
          </plugin>

          <!--
            Tell Maven to create a source bundle artifact during the package
            phase. This is extremely useful when sharing code, as the act of
            sharing means you'll want to create a relatively large number of
            smallish artifacts, so creating IDE projects that refer directly
            to the source code is unmanageable. But the Maven integration of
            a good IDE will fetch the Maven source bundle if available, so if
            you navigate to a class that is included via Maven from your
            top-level project, you'll still see the source version - and even
            the right source version, because you'll get what corresponds
            to the binary that has been linked.
          -->
          <plugin>
            <artifactId>maven-source-plugin</artifactId>
            <executions>
              <execution>
                <phase>package</phase>
                <goals>
                  <goal>jar</goal>
                </goals>
              </execution>
            </executions>
          </plugin>

          <!--
            Ensure that a javadoc jar is being generated and deployed. This
            is useful for similar reasons as source bundle generation,
            although to a lesser degree in my opinion. Javadoc is great, but
            the source is always up to date.
          -->
          <plugin>
            <artifactId>maven-javadoc-plugin</artifactId>
            <executions>
              <execution>
                <phase>package</phase>
                <goals>
                  <goal>jar</goal>
                </goals>
              </execution>
            </executions>
          </plugin>

          <!--
            The below configuration information was necessary to ensure that
            you can use the maven release plugin with Git as a version control
            system. The exact version numbers that you want to use are likely
            to have changed since then, and it may even be that Git support is
            more closely integrated nowadays, so less explicit configuration
            is needed - I haven't tested that since maybe March 2009.
          -->
          <plugin>
            <groupId>org.apache.maven.plugins</groupId>
            <artifactId>maven-release-plugin</artifactId>
            <dependencies>
              <dependency>
                <groupId>org.apache.maven.scm</groupId>
                <artifactId>maven-scm-provider-gitexe</artifactId>
                <version>1.1</version>
              </dependency>
              <dependency>
                <groupId>org.codehaus.plexus</groupId>
                <artifactId>plexus-utils</artifactId>
                <version>1.5.7</version>
              </dependency>
            </dependencies>
          </plugin>
          <plugin>
            <groupId>org.apache.maven.plugins</groupId>
            <artifactId>maven-scm-plugin</artifactId>
            <version>1.1</version>
            <dependencies>
              <dependency>
                <groupId>org.apache.maven.scm</groupId>
                <artifactId>maven-scm-provider-gitexe</artifactId>
                <version>1.1</version>
              </dependency>
              <dependency>
                <groupId>org.codehaus.plexus</groupId>
                <artifactId>plexus-utils</artifactId>
                <version>1.5.7</version>
              </dependency>
            </dependencies>
          </plugin>
        </plugins>
      </build>

      <!--
        Configuration of internal repositories so that the sub-projects
        know where to download internally created artifacts from. Note
        that due to a bootstrapping issue, this configuration needs to
        be duplicated in individual projects. This file, the shared POM,
        is available from the Nexus repo, but if the project POM doesn't
        contain the repo config, the project build won't know where to
        download the shared POM.
      -->
      <repositories>
        <!-- internal Nexus repository for released artifacts -->
        <repository>
          <id>internal-releases</id>
          <url>http://NEXUSHOST/nexus/content/repositories/internal-releases</url>
          <releases><enabled>true</enabled></releases>
          <snapshots><enabled>false</enabled></snapshots>
        </repository>

        <!-- internal Nexus repository for SNAPSHOT artifacts -->
        <repository>
          <id>internal-snapshots</id>
          <url>http://NEXUSHOST/nexus/content/repositories/internal-snapshots</url>
          <releases><enabled>false</enabled></releases>
          <snapshots><enabled>true</enabled></snapshots>
        </repository>

        <!--
          Nexus repository cache for third party repositories such as
          ibiblio. This is not necessary, but is likely to be a
          performance improvement for your builds.
        -->
        <repository>
          <id>3rd party</id>
          <url>http://NEXUSHOST/nexus/content/repositories/thirdparty/</url>
          <releases><enabled>true</enabled></releases>
          <snapshots><enabled>false</enabled></snapshots>
        </repository>
      </repositories>

      <distributionManagement>
        <!-- Defines where to deploy released artifacts to -->
        <repository>
          <id>internal-repository-releases</id>
          <name>Internal release repository</name>
          <url>URL TO NEXUS RELEASES REPOSITORY</url>
        </repository>

        <!-- Defines where to deploy artifact snapshot to -->
        <snapshotRepository>
          <id>internal-repository-snapshot</id>
          <name>Internal snapshot repository</name>
          <url>URL TO NEXUS SNAPSHOTS REPOSITORY</url>
        </snapshotRepository>
      </distributionManagement>
    </project>

The less pleasant part of using Maven is that you’ll need to learn more about Maven’s internals than you’d probably like, and you’ll most likely stop trying to fix your builds not when you’ve understood the problem and solved it in the way you know is correct, but when you’ve arrived at a configuration that works through trial and error (as you can see from my comments in the example pom.xml above). The benefits you’ll get in terms of simplifying the management of build artifacts across teams and actually also simplifying the builds themselves outweigh the costs of the occasional hiccup, though. A typical top-level project at Shopzilla links in around 70 internal artifacts through various transitive dependencies – managing that number of dependencies is not easy unless you have a good tool to support you, and dependency management is where Maven shines.