Posts Tagged Code Concepts

Composition vs Inheritance

Posted by Petter Måhlén in Java on August 20, 2010

One of the questions I often ask when interviewing candidate programmers is “what is the difference between inheritance and composition?” Some people don’t know, and they’re obviously out right then and there. Most people explain that “inheritance represents an is-a relationship and composition a has-a”, which is correct but insufficient. Very few people have a good answer to the question I’m really asking: what impact does the choice of one or the other have on your software design? Over the last few years, there seems to have been a growing feeling that inheritance is over-used and composition correspondingly under-used. I agree, and will try to show some concrete reasons why. (As a side note, the main trigger for this blog post is that about a year ago, I introduced a class hierarchy as part of a refactoring story, and it was immediately obvious that it was an awkward solution. However, it and the other changes represented a big improvement over what went before, and there wasn’t time to fix it back then. It has bugged me ever since, and thanks to our gradual refactoring, we’re getting closer to the point where we can put something better in place, so I started thinking about it again.)

One guy I interviewed the other day made a pretty good attempt at describing when to choose one or the other: it depends on which is closest to the real-world situation you’re trying to model. That has the ring of truth to it, but I’m not sure it is enough. As an example, take the classically hierarchical model of the animal kingdom: Humans and Chimpanzees are both Primates, and, skipping a bunch of steps, they are Animals just like Kangaroos and Tigers. This is clearly a set of is-a relationships, so we could model it like so:

```java
// Each public class would live in its own source file.
public class Animal {}
public class Primate extends Animal {}
public class Human extends Primate {}
public class Chimpanzee extends Primate {}
public class Kangaroo extends Animal {}
public class Tiger extends Animal {}
```

Now, let’s add some information about their various body types and how they walk. Both Humans and Chimpanzees have two arms and two legs, and Tigers have four legs. So we could add two legs and two arms at the Primate level, and four legs at the Tiger level. But then it turns out that Kangaroos also have two legs and two arms, so this solution doesn’t work without duplication. Perhaps a more general concept involving limbs is needed at the animal level? There would still be duplication of the ‘legs + arms’ classification in both Kangaroo and Primate, not to mention what would happen if we introduce a RattleSnake into our Eden (or an EarthWorm if you prefer an animal that has never had limbs at any stage of its evolutionary history).

A similar problem is shown by introducing a walk() method. Humans do a bipedal walk, Chimpanzees and Tigers walk on all fours, and Kangaroos hop using their rear feet and tail. Where do we locate the logic for movement? All the animals in our sample hierarchy can walk on all fours (although humans tend to do so only when young or inebriated), so having a quadrupedWalk() method at the Animal level that is called by the walk() methods of Tiger and Chimpanzee makes sense. At least until we add a Chicken or Halibut, because they will now have access to a type of locomotion they shouldn’t.
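To make the problem concrete, here is a minimal sketch of that hierarchy with the movement logic pulled up into `Animal`. It assumes `walk()` returns a description string purely so that the behaviour is easy to check, and `Halibut` is the hypothetical later addition mentioned above; the post itself only names the methods.

```java
// Sketch: locomotion logic placed in the class hierarchy.
// walk() returns a String only to make the behaviour easy to observe.
abstract class Animal {
    // Shared helper at the root, reused by Tiger and Chimpanzee.
    protected String quadrupedWalk() {
        return "walks on all fours";
    }

    public abstract String walk();
}

abstract class Primate extends Animal {}

class Human extends Primate {
    @Override
    public String walk() {
        return "walks on two legs";
    }
}

class Chimpanzee extends Primate {
    @Override
    public String walk() {
        return quadrupedWalk();
    }
}

class Tiger extends Animal {
    @Override
    public String walk() {
        return quadrupedWalk();
    }
}

// Hypothetical later addition: a fish still inherits quadrupedWalk(),
// and nothing in the type system stops it from using it.
class Halibut extends Animal {
    @Override
    public String walk() {
        return quadrupedWalk(); // compiles fine, but makes no sense
    }
}

class HierarchyDemo {
    public static void main(String[] args) {
        System.out.println(new Tiger().walk());   // prints "walks on all fours"
        System.out.println(new Halibut().walk()); // prints "walks on all fours"
    }
}
```

The compiler sees a perfectly valid override in `Halibut`; the leak of inappropriate behaviour is invisible at the type level.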

The root problem here is, of course, that the model is bad even though it seems like a natural one. If you think about it, body types and locomotion are not defined by the various creatures’ positions in the taxonomy: there are both mammals and fish that swim, and fish, lizards and snakes can all be legless. I think it is natural for us to think in terms of templates and properties inherited from a more general class (Douglas Hofstadter said something very similar, I think in Gödel, Escher, Bach). If that kind of thinking is a human tendency, it could explain why it seems so common to initially think that a hierarchical model is the best one. It is often a mistake to assume that the presence of an is-a relationship in the real world means that inheritance is right for your object model.

But even if, unlike the example above, a hierarchical model is a suitable way to describe a certain real-world scenario, there are issues. A big one, in my opinion, is that the classes that make up a hierarchical model are very tightly coupled. You cannot instantiate a class without also running the constructors and initialising the state of all of its superclasses. This makes them harder to test: you can never ‘mock’ a superclass, so each test of a leaf in the hierarchy requires you to do all the setup for all the classes above it. In the example above, you might have to set up the limbs of your RattleSnake and the data needed for the quadrupedWalk() method of the Halibut before you could test them.

With inheritance, the smallest testable unit is larger than it is with composition, and you increase code duplication, in your tests at least. In this way, inheritance is similar to the use of static methods: it removes the option to inject different behaviour when testing. The more code you try to reuse from the superclass(es), the more redundant test code you will typically get, and all that redundancy will slow you down when you modify the code in the future. Or, worse, it will make you not test your code at all because it’s such a pain.

There is an alternative model, where each of the beings above has-a BodyType and possibly also has-a Locomotion that knows how to move that body. Each of the animals could then probably be a differently configured instance of the same Animal class.

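```java
// Each public type would live in its own source file.
public interface BodyType {}

public class TwoArmsTwoLegs implements BodyType {}

public class FourLegs implements BodyType {}

public class NoLimbs implements BodyType {}

public interface Locomotion<B extends BodyType> {
    void walk(B body);
}

public class BipedWalk implements Locomotion<TwoArmsTwoLegs> {
    public void walk(TwoArmsTwoLegs body) {}
}

public class Slither implements Locomotion<NoLimbs> {
    public void walk(NoLimbs body) {}
}

// The type parameter ties each animal's locomotion to a matching body type.
public class Animal<B extends BodyType> {
    private final B body;
    private final Locomotion<B> locomotion;

    public Animal(B body, Locomotion<B> locomotion) {
        this.body = body;
        this.locomotion = locomotion;
    }

    // Moving is delegated to whichever Locomotion this animal was built with.
    public void walk() {
        locomotion.walk(body);
    }
}

Animal<TwoArmsTwoLegs> human = new Animal<>(new TwoArmsTwoLegs(), new BipedWalk());
```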

This way, you can very easily test the bodies, the locomotions and the animals in isolation from each other. You may or may not want to do some automated functional testing of the way you wired up your Human to ensure that it behaves the way you want it to. I think composition is almost universally preferable to inheritance for code reuse: I’ve tried to think of cases where the only natural model is a hierarchical one and you can’t use composition instead, but it seems to be beyond me.
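Here is a sketch of what testing in isolation can look like. It assumes `Animal` takes its body and locomotion through a constructor and exposes a `walk()` that delegates to the `Locomotion` (the original snippet shows only the fields); `RecordingLocomotion` and `AnimalIsolationCheck` are hypothetical names. A hand-written fake replaces the real locomotion, which is exactly the kind of substitution an inherited superclass method does not allow.

```java
import java.util.ArrayList;
import java.util.List;

interface BodyType {}

final class TwoArmsTwoLegs implements BodyType {}

interface Locomotion<B extends BodyType> {
    void walk(B body);
}

// Assumed shape of Animal: holds a body and a locomotion, and delegates walking.
final class Animal<B extends BodyType> {
    private final B body;
    private final Locomotion<B> locomotion;

    Animal(B body, Locomotion<B> locomotion) {
        this.body = body;
        this.locomotion = locomotion;
    }

    void walk() {
        locomotion.walk(body);
    }
}

// Test double: records which bodies it was asked to move instead of moving them.
final class RecordingLocomotion<B extends BodyType> implements Locomotion<B> {
    final List<B> walkedBodies = new ArrayList<>();

    @Override
    public void walk(B body) {
        walkedBodies.add(body);
    }
}

class AnimalIsolationCheck {
    public static void main(String[] args) {
        TwoArmsTwoLegs body = new TwoArmsTwoLegs();
        RecordingLocomotion<TwoArmsTwoLegs> fake = new RecordingLocomotion<>();
        Animal<TwoArmsTwoLegs> animal = new Animal<>(body, fake);

        animal.walk();

        // The animal handed its own body to the injected locomotion exactly once.
        if (fake.walkedBodies.size() != 1 || fake.walkedBodies.get(0) != body) {
            throw new AssertionError("Animal did not delegate to its Locomotion");
        }
        System.out.println("Animal delegates walking to its Locomotion");
    }
}
```

No real body mechanics or gait logic is needed to test `Animal`; each `Locomotion` implementation can get its own focused tests.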

In addition to the arguments here, there’s the well-known fact that inheritance breaks encapsulation by exposing subclasses to implementation details of the superclass. This makes subclassing a fragile way to integrate with a library, or at least one that limits the future evolution of the library.
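The classic illustration is the instrumented set from Effective Java, sketched here with illustrative class names. The subclass counts insertions by overriding both `add` and `addAll`, so its answer depends on whether `HashSet`'s inherited `addAll` happens to call `add`. The wrapper holds a `Set` and forwards to it, so its count does not depend on how the wrapped set is implemented. For brevity, the wrapper does not implement `Set` itself; Bloch's version does, via a forwarding class.

```java
import java.util.Arrays;
import java.util.Collection;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

// Inheritance: correctness depends on a HashSet implementation detail.
class InstrumentedHashSet<E> extends HashSet<E> {
    int addCount = 0;

    @Override
    public boolean add(E e) {
        addCount++;
        return super.add(e);
    }

    @Override
    public boolean addAll(Collection<? extends E> c) {
        addCount += c.size();
        // If super.addAll() is implemented by calling add(), every element
        // is counted a second time by the override above.
        return super.addAll(c);
    }
}

// Composition: wraps any Set and forwards to it.
class CountingSet<E> {
    private final Set<E> delegate;
    private int addCount = 0;

    CountingSet(Set<E> delegate) {
        this.delegate = delegate;
    }

    boolean add(E e) {
        addCount++;
        return delegate.add(e);
    }

    boolean addAll(Collection<? extends E> c) {
        addCount += c.size();
        // The delegate's internal add() calls are not ours, so nothing is counted twice.
        return delegate.addAll(c);
    }

    int getAddCount() {
        return addCount;
    }
}

class FragileBaseClassDemo {
    public static void main(String[] args) {
        List<String> words = Arrays.asList("snap", "crackle", "pop");

        InstrumentedHashSet<String> inherited = new InstrumentedHashSet<>();
        inherited.addAll(words);

        CountingSet<String> composed = new CountingSet<>(new HashSet<>());
        composed.addAll(words);

        // 6 if HashSet.addAll() calls add(), as Effective Java describes
        System.out.println("inherited: " + inherited.addCount);
        System.out.println("composed:  " + composed.getAddCount()); // 3
    }
}
```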

So, there it is: that summarises what I think the differences between composition and inheritance are, and why one should tend to prefer composition over inheritance. And if you’re reading this because you googled me before an interview, this is one answer you should get right!

Immutability: a constraint that empowers

Posted by Petter Måhlén in Java, Software Development on May 4, 2010

In Effective Java (Second Edition, item 15), Josh Bloch argues that Java programmers should have the default mindset that their objects are immutable, and that mutability should be used only when strictly necessary. The reason for this post is that most Java code I read doesn’t seem to follow this advice, and I have come to the conclusion that immutability is an amazing concept. My theory is that one of the reasons immutability isn’t as widely used as it should be is that, as programmers or architects, we usually want to open up possibilities: a flexible architecture allows for future growth and change, and gives as much freedom as possible to solve coding problems in the most suitable way. Immutability seems to go against that desire for flexibility in that it takes an option away: if your architecture involves immutable objects, you’ve reduced your freedom because those objects can no longer change. How can that be good?

The two main motivations for using immutability according to Effective Java are that a) it is easier to share immutable objects between threads and b) immutable objects are fundamentally simple because they cannot change state once instantiated. The former seems to be the most commonly repeated argument in favour of immutable objects, probably because it is far more concrete: make objects immutable and they can easily be shared by threads. The latter, that immutable objects are fundamentally simple, is in my opinion the more powerful (or at least more ubiquitous) reason, but the argument is harder to make. After all, there’s nothing you can do with an immutable object that you cannot also do with a mutable one, so why constrain your freedom? I hope to illustrate a couple of ways in which removing your ability to modify things both opens up macro-level architectural options and improves your code on the micro level.

Macro Level: Distributed Systems

First, I would like to spend some time on the concurrency argument and look at it from a more general perspective than just Java. Let’s take something that is familiar to anybody who has worked on a distributed system: caching. A cache is a (more) local copy of something where the master copy is for some reason expensive to fetch. Caching is extremely powerful and it is used literally everywhere in computing. The fact that the item in the cache could be different to the master copy, due to either one of them having changed, leads to a lot of problems that need solving. So there are algorithms like read-through, refresh-ahead, write-through, write-behind, and so on, that deal with the problem that the data in your cache or caches may be outdated or in a ‘future state’ when compared to the master copy. These problems are well-studied and solved, but even so, having to deal with stale data in caches adds complexity to any system that has to deal with it. With immutable objects, these problems just vanish. If you have a copy of a certain object, it is guaranteed to be the current one, because the object never changes. That is extremely powerful in designing a distributed architecture.

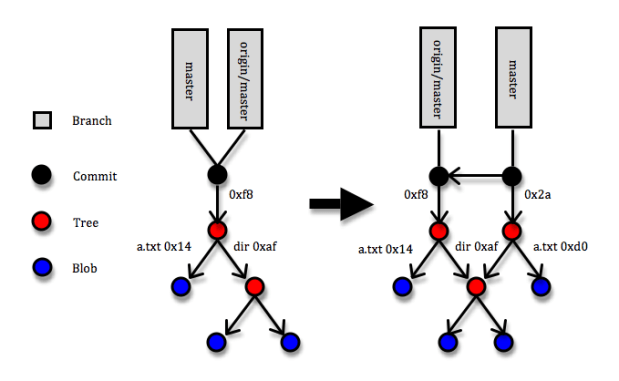

I wonder if I can write a blog post that doesn’t mention Git; apparently not this one, at least :). Git is a marvellous example of a distributed architecture whose power comes to a large degree from reducing mutability. Most of the entities in Git are immutable: commits, trees and blobs, all of which are referenced via keys that are SHA-1 hashes of their contents. If a blob (a file) changes, a new entity with a new hash code is created. If a tree (a directory: essentially a list of names and hash codes identifying the trees or blobs that live underneath it) changes because a file is added, removed or modified, a new tree entity with new contents and a new hash code is created. And whenever changes are committed to a Git repository, a commit entity is created that describes the change set, with a reference to the new top tree entity, a pointer to the previous commit(s), and some metadata about who made the commit and when. Since the reference to each object is uniquely defined by its content, two identical objects that are created separately will always have the same key. (If you want to learn more about Git internals, here is a great though not free explanation.) So what makes that brilliant? The immutability of objects means that your copy is guaranteed to be correct, and SHA-1 object references mean that identical objects are guaranteed to have identical references, which speeds up identity checks: since collisions are extremely unlikely, identical references effectively mean identical objects. Suppose somebody makes a commit that includes a blob (file) with hash code 14, you fetch the commit to your local Git repository, and a blob with hash code 14 is already in your repository. When Git fetches the commit, it’ll come to the tree that references blob 14 and simply note that “I’ve already got blob 14, so no need to fetch it”.

The diagram above shows a simplified picture of a Git repository where a commit is added. The root of the repository is a folder with a file called ‘a.txt’ and a directory called ‘dir’. Under the directory there are a couple more files. In the commit, the a.txt file is changed. This means that three new entities are created: the new version of a.txt (hash code 0xd0), the new version of the root directory (hash code 0x2a), and the commit itself. Note that the new root directory still points to the old version of the ‘dir’ directory, and that none of the objects that describe the previous version of the root directory are affected in any way.

That Git uses immutable objects in combination with references derived from the content of objects is what opens up possibilities for being very efficient in terms of networking (only transferring necessary objects), local storage space (storing identical objects only once), comparisons and merges (when you reach a point where two trees point to the same object, you stop), and so on. Note that this usage of immutable objects is quite different from the one you read about in books like Effective Java and Java Concurrency in Practice. In those books, you can easily get the impression that immutability requires some pretty special measures regarding how you specify your classes, inheritance and constructors. I’d say that is only part of the truth: if you do take those special measures in your Java code, you get the benefit of some specific guarantees made by the Java Memory Model that ensure you can safely share your immutable objects between threads inside a JVM. But even without the solid guarantees about immutability that the JVM can provide, using immutability can bring huge architectural gains. Git doesn’t have any means of enforcing strict immutability (I can just edit the contents of something stored under .git/objects, with probable chaos ensuing), yet relying on it opens up a very powerful architecture.
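The transfer and storage savings can be sketched with a tiny content-addressed store. This illustrates the idea only and is not Git's implementation: `ObjectStore` and its methods are hypothetical, and whereas Git hashes a short header (such as `blob <size>` followed by a NUL byte) together with the content, this sketch hashes the raw bytes.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.Arrays;
import java.util.HashMap;
import java.util.Map;

// Hypothetical content-addressed store: an object's key is the SHA-1 of its bytes.
final class ObjectStore {
    private final Map<String, byte[]> objects = new HashMap<>();

    static String keyFor(byte[] content) {
        try {
            byte[] digest = MessageDigest.getInstance("SHA-1").digest(content);
            StringBuilder hex = new StringBuilder();
            for (byte b : digest) {
                hex.append(String.format("%02x", b));
            }
            return hex.toString();
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("SHA-1 must be supported by every Java platform", e);
        }
    }

    // Storing identical content twice yields the same key and keeps a single copy.
    String put(byte[] content) {
        String key = keyFor(content);
        objects.putIfAbsent(key, content.clone());
        return key;
    }

    boolean has(String key) {
        return objects.containsKey(key);
    }

    int size() {
        return objects.size();
    }

    // Copies only the objects we lack; assumes the remote holds every requested key.
    // Because objects never change, any key we already hold is guaranteed current.
    int fetch(ObjectStore remote, Iterable<String> keys) {
        int transferred = 0;
        for (String key : keys) {
            if (!has(key)) {
                objects.put(key, remote.objects.get(key).clone());
                transferred++;
            }
        }
        return transferred;
    }
}

class ObjectStoreDemo {
    public static void main(String[] args) {
        ObjectStore local = new ObjectStore();
        ObjectStore remote = new ObjectStore();
        String shared = local.put("hello\n".getBytes(StandardCharsets.UTF_8));
        remote.put("hello\n".getBytes(StandardCharsets.UTF_8));
        String fresh = remote.put("new file\n".getBytes(StandardCharsets.UTF_8));

        int transferred = local.fetch(remote, Arrays.asList(shared, fresh));
        System.out.println("transferred " + transferred + " object(s)"); // transferred 1 object(s)
    }
}
```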

When you’re writing multi-threaded Java programs, use the strong guarantees you can get from defining your Java classes correctly, but don’t be afraid to make immutability a core architectural idea even if there is no way to actually formally enforce it.
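For the in-JVM case, here is a minimal sketch of the measures Effective Java's item 15 lists: the class is `final`, every field is `private final`, there are no mutators, mutable components are defensively copied, and "changes" produce new instances. Final fields also get the Java Memory Model's guarantee that other threads see their constructed values without extra synchronization, provided the reference does not escape during construction. The `Commit` class is a hypothetical example loosely modelled on the Git discussion above, not Git's data structure.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;
import java.util.Objects;

// Hypothetical immutable value class following the Effective Java checklist.
final class Commit {                      // final: no subclass can add mutable state
    private final String treeId;          // private final: assigned exactly once
    private final String message;
    private final List<String> parentIds;

    Commit(String treeId, String message, List<String> parentIds) {
        this.treeId = Objects.requireNonNull(treeId);
        this.message = Objects.requireNonNull(message);
        // Defensive copy: later changes to the caller's list cannot leak in.
        this.parentIds = Collections.unmodifiableList(new ArrayList<>(parentIds));
    }

    String treeId() { return treeId; }

    String message() { return message; }

    List<String> parentIds() { return parentIds; } // already unmodifiable

    // "Changing" a commit produces a new object; the original is untouched.
    Commit withMessage(String newMessage) {
        return new Commit(treeId, newMessage, parentIds);
    }
}

class CommitDemo {
    public static void main(String[] args) {
        List<String> parents = new ArrayList<>(Arrays.asList("a1"));
        Commit original = new Commit("t1", "first", parents);
        parents.add("b2"); // does not affect original

        Commit reworded = original.withMessage("second");
        System.out.println(original.message() + " " + original.parentIds()); // first [a1]
        System.out.println(reworded.message()); // second
    }
}
```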

Micro Level: Building Blocks

I guess the clearest point I can make about immutability, like most of the others who have written about it, is still the one about concurrent execution. But I also believe in the second point, about the simplicity immutability gives you even if your system isn’t distributed. For me, the essence of that point is that you can disregard any immutable object as a source of weirdness when you’re trying to figure out how the code in front of you works. Once you have confirmed to yourself that an immutable object is instantiated correctly, it is guaranteed to remain correct for the rest of the logical flow you’re looking at. You can filter out that object and focus your valuable brainpower on other things. As a former chess player, I see a strong analogy: once you’ve played a bit, you stop seeing three pawns, a rook and a king; you see a king-side castle formation. This is a single unit, and you know how it interacts with the rest of the pieces on the board. This lets you simplify your mental model of the board, reducing clutter and giving you a clearer picture of the essentials. A mutable object resists simplification, so you constantly need to be alert to something that might change it and affect the logic.

Beyond the simplicity the classes themselves gain, making them immutable lets you communicate your intent as a designer clearly: “this object is read-only, and if you believe you need to change it in order to modify the functionality of this code, you’ve either not yet understood how the design fits together or requirements have changed to such an extent that the design no longer supports them”. Immutable objects make for extremely solid and easily understood building blocks and give readers clear signals about their intended usage.

When immutability doesn’t cut the mustard

Very few things are purely beneficial, and immutability is of course no exception. So what problems do you run into with immutability? Well, first of all, no system is interesting without mutable state, so you’ll need at least some mutable objects. In Git, branches are a prime example of a mutable object, and hence also the one source of conflicts between different repositories. A branch is simply a pointer to the latest commit on that branch, and when you create new commits on a branch, the pointer is updated. In the diagram above, that is reflected by the ‘master’ label having moved to point to the latest commit, while the local copy of where ‘origin/master’ is pointing is unchanged and will remain so until the next push or fetch. In the meantime, the actual branch on the origin repository may have changed, so Git needs to handle possible conflicts there. So branches suffer from the normal cache-related staleness and concurrent update problems, but thanks to most entities being immutable, these problems are confined to a small section of the system.
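That confinement can be sketched as one mutable reference pointing at immutable commits. This is a hypothetical illustration rather than how Git stores refs: the branch is an `AtomicReference`, and moving it only succeeds if it still points where the writer last saw it, so a concurrent update is detected rather than silently lost. Git's `git update-ref` can take an expected old value for a similar check.

```java
import java.util.concurrent.atomic.AtomicReference;

// Immutable commit: once created, its fields never change.
final class Commit {
    private final String id;
    private final Commit parent; // null for the first commit

    Commit(String id, Commit parent) {
        this.id = id;
        this.parent = parent;
    }

    String id() { return id; }

    Commit parent() { return parent; }
}

// The only mutable piece: a named pointer to the latest commit.
final class Branch {
    private final AtomicReference<Commit> head;

    Branch(Commit initial) {
        this.head = new AtomicReference<>(initial);
    }

    Commit head() {
        return head.get();
    }

    // Moves the branch only if nobody else has moved it since `expected` was read.
    boolean advance(Commit expected, Commit next) {
        if (next.parent() != expected) {
            throw new IllegalArgumentException("next must build on expected");
        }
        return head.compareAndSet(expected, next);
    }
}

class BranchDemo {
    public static void main(String[] args) {
        Commit root = new Commit("c0", null);
        Branch master = new Branch(root);

        // Two writers both start from the same head.
        Commit mine = new Commit("c1", root);
        Commit theirs = new Commit("c2", root);

        System.out.println(master.advance(root, mine));   // true
        System.out.println(master.advance(root, theirs)); // false: head has moved
        System.out.println(master.head().id());           // c1
    }
}
```

The commits themselves never need locking or cache invalidation; only the single `head` reference has to deal with staleness.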

Another thing you’ll need, which a JVM provides for free but systems in general don’t, is garbage collection. You’ll keep creating new objects instead of changing the existing ones, and for most kinds of systems, that means you’ll want to get rid of the outdated stuff. Most of the time, that stuff will simply sit on some disk somewhere, and disk space is cheap, so you don’t need to get super-sophisticated with your garbage collection algorithms. In general, the memory allocation and garbage collection overhead you incur from creating new objects instead of updating existing ones is much less of a problem than it seems at first glance. I’ve personally run into one case where it was clearly impossible to make objects immutable: Ardor3D, an open-source Java 3D engine, where the most commonly used class is Vector3, a vector of three doubles. It feels like a great candidate for immutability, since it is a typical small, simple value object. However, the main task of a 3D engine is to update the positions of many thousands of things at least 50 times per second, so in this case immutability was out of the question. Our solution was to signal ‘immutability intent’ by having the Vector3 class (and some similar classes) implement a ReadOnlyVector3 interface, and to use that interface whenever a class needed a vector but wasn’t planning on modifying it.
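Here is a much-simplified sketch of the 'immutability intent' pattern. The type names come from the post, but the methods are illustrative and may not match Ardor3D's actual API. The interface exposes only getters, the mutable class implements it and updates in place, and code that merely reads positions asks for the interface. The compiler rejects writes through a `ReadOnlyVector3` reference, but whoever holds the concrete `Vector3` can still mutate it, so this signals intent rather than guaranteeing immutability.

```java
// Read-only view: accessors only, no setters.
interface ReadOnlyVector3 {
    double getX();

    double getY();

    double getZ();
}

// Mutable implementation, updated in place every frame to avoid allocation.
final class Vector3 implements ReadOnlyVector3 {
    private double x, y, z;

    Vector3(double x, double y, double z) {
        set(x, y, z);
    }

    @Override public double getX() { return x; }
    @Override public double getY() { return y; }
    @Override public double getZ() { return z; }

    Vector3 set(double x, double y, double z) {
        this.x = x;
        this.y = y;
        this.z = z;
        return this;
    }

    // Adds another vector to this one in place, without creating a new object.
    Vector3 addLocal(ReadOnlyVector3 other) {
        return set(x + other.getX(), y + other.getY(), z + other.getZ());
    }
}

// A consumer that only reads positions declares the read-only type.
final class DistanceCheck {
    static boolean within(ReadOnlyVector3 a, ReadOnlyVector3 b, double maxDistance) {
        double dx = a.getX() - b.getX();
        double dy = a.getY() - b.getY();
        double dz = a.getZ() - b.getZ();
        return dx * dx + dy * dy + dz * dz <= maxDistance * maxDistance;
    }
}

class Vector3Demo {
    public static void main(String[] args) {
        Vector3 position = new Vector3(0, 0, 0);
        position.addLocal(new Vector3(3, 4, 0)); // per-frame style update, in place
        System.out.println(DistanceCheck.within(position, new Vector3(0, 0, 0), 5.0)); // true
    }
}
```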

Effective Java contains a lot more solid, if semi-concrete, advice about why immutability is useful outside the context of sharing state between threads, so if you’re not convinced yet, I would urge you to re-read item 15. Or maybe the whole book, since everything Josh Bloch says is fantastic. To me, the use of immutability in Git shows that removing some aspects of freedom can unlock opportunities in other parts of the system. I also think that you should do everything you can to make your code easier for the reader to understand. Reducing mutability can have a game-changing impact on the macro level of your system, and it is guaranteed to help remove mental clutter and thereby improve your code on the micro level.

if (readable) good();

Posted by Petter Måhlén in Java, Software Development on March 12, 2010

Last summer, I read the excellent book Clean Code by Robert Martin and was so impressed that I made it mandatory reading for the coders in my team. One of the techniques that he describes is that rather than writing code like:

```java
if (a != null && a.getLimit() > current) {
    // ...
}
```

you should replace the boolean conditions with a method call and make sure the method name is descriptive of what you think should be true to execute the body of the if statement:

```java
if (withinLimit(a, current)) {
    // ...
}

private boolean withinLimit(A a, int current) {
    return a != null && a.getLimit() > current;
}
```

I think that is great advice, because I used to always say that unclear logical conditions are one of the things that really require code comments to make them understandable. The scenario where you have an if-statement with 3-4 boolean conditions AND-ed and OR-ed together is

- not uncommon,
- frequently a source of bugs, or something that needs changing, and
- something that stops you dead in your tracks when you’re trying to puzzle out what the code in front of you does.

So it is important to make your conditions easily understandable. Rather than documenting what you want the condition to mean, simply creating a tiny method whose name says exactly that makes the flow of the code so much smoother. I also think (but I’m not sure) that it is easier to keep method names in sync with code updates than it is to keep comments up to date.

So that’s advice I have taken on board when I write code. Yesterday, I found myself writing this:

```java
public void doFilter(ServletRequest request, ServletResponse response, FilterChain chain) {
    // ...
    if (shouldPerformTracking()) {
        executor.submit(new TrackingCallable(servletRequest, trackingService, ctrContext));
    }
    // ...
}

private boolean shouldPerformTracking() {
    // only do tracking if the controller has added a page node to the context
    return trackingDeterminator.performTracking() && hasPageNode(ctrContext);
}

private boolean hasPageNode(CtrContext ctrContext) {
    return !ctrContext.getEventNode().getChildren().isEmpty();
}
```

As I was about to check that in, it suddenly struck me that even though the name of the method describing the second logical condition (hasPageNode()) was correct, it was odd that I had just written a comment explaining exactly what I meant by that condition: that tracking should happen if it was enabled and the controller had added tracking information to the context. I figured out what the problem was and changed it to this:

```java
private boolean shouldPerformTracking() {
    return trackingDeterminator.performTracking() && controllerAddedPageInfo(ctrContext);
}

private boolean controllerAddedPageInfo(CtrContext ctrContext) {
    return !ctrContext.getEventNode().getChildren().isEmpty();
}
```

That was a bit of an eye-opener. The name of the condition method should describe the intent, not the mechanics of the condition. The first take on the method name (hasPageNode()) described what the condition checked in a more readable way, but it didn’t describe why that was a useful thing to check. When condition-method names explain the why of the condition, I strongly think they increase code readability, which makes this a great technique.

As always, naming is hard and worth spending a little extra effort on if you want your code to be readable.

Unit Tests Are Good For You

Posted by Petter Måhlén in Software Development on March 6, 2010

Some 5-6 years ago, I became a mother, biologically implausible as it seems. At the time, I was CTO at Jadestone and that’s when I started to think that programmers writing their own unit tests is a paradigm shift of the same magnitude as object-orientation was in the 90s. So I started trying to convert the developers (and others) to the unit testing mentality, with varying success – some became as convinced as myself, others were very reluctant to write any tests at all, and most seemed to end up in some in-between state: “I guess it’s a good thing to do, and I will if there’s time, but…” It seems like a lot of developers end up somewhere in that middle state, with writing unit tests being something a bit analogous to cleaning your room every week. You know it’s a good thing to do, and you do it because Mum (yep, that’s me) is telling you you have to, but you don’t really want to.

Even at Shopzilla, where the builds fail unless a certain percentage of the code is covered by unit tests (this is a great practice despite the shortfalls of code coverage as a measurement of test quality), there are issues with people’s motivation to write unit tests. Far too much of our code is being ‘exercised’ by the unit tests with no checking of expected results, and/or without sufficient branch coverage.

I remember creating slides at Jadestone with what I still think are really good reasons to write lots of unit tests: it improves the code quality by helping you find bugs, making your code testable forces you to design it in a modular way, having great regression tests gives you the courage to do the aggressive refactoring that is required for long-term productivity, and so on. But after a few years of working with it, I’ve come to the conclusion that the main reason really is – hold your ears, I can feel years of nagging coming out all at once:

IT’S MAKING *YOU* MORE PRODUCTIVE. DAMMIT!

People thinking “I will write unit tests if I have the time” or “when I’m done with the code” are just plain wrong. True, just writing the code needed for a feature or bug fix takes less time than also writing unit tests. But checking that the fix/feature works if it is a part of a slightly complex system? Starting or restarting one of the Shopzilla websites takes something like 3-7 minutes. Once you’re up, you can typically test almost anything by navigating to a single URL, but if you didn’t get the fix right, you have to re-fix, re-build and re-start. If the fix is in a shared library, you need to re-build and re-install the library, then re-build and re-start the site. This takes ages. Usually a lot more than the time it takes to verify the fix with a unit test.

And with multiplayer games, for instance, the situation is even harder. To verify a Shopzilla-style website bug, all you need is normally a single URL to reproduce the problem. With more complex clients, you may have to bring a couple of clients and a server into a given error state in order to reproduce/verify a bug. This can be a nightmare. Even with unit tests, you’ll obviously have to do this, but if you do TDD, you’ll have written a test that reproduces the observed error before trying to fix it. Seeing that the unit test used to be broken but isn’t any longer is great for your confidence and usually means you only need to do the work to reproduce the bug “in reality” once. I’m not sure it is necessary to write tests before you write all your code, but I think it is a good idea to try to do so. And you definitely don’t want to write all your unit tests after you’ve written all the feature code.

I don’t try to convert people to unit testing as much as I used to, but if you do use unit tests properly, not only will you write code that is of higher quality, more modular and easier to refactor to support future features and changes; you’ll also be adding working features quicker. Really, kids, you should clean your rooms every week.

Have I learned something about API Design?

Posted by Petter Måhlén in Java on March 3, 2010

Some years ago, somebody pointed me to Joshua Bloch’s excellent presentation about how to design APIs. If you haven’t watched it, go ahead and do that before reading on. To me, that presentation felt and still feels like it was full of those things where you can easily understand the theory but where the practice eludes you unless you – well, practice, I guess. Advice like “When in doubt, leave it out”, or “Keep APIs free of implementation details” is easy to understand as sentences, but harder to really understand in the sense of knowing how to apply it to a specific situation.

I’m right now working on making some code changes that were quite awkward, and awkward code is always an indication of a need to tweak the design a little. As a part of the awkwardness-triggered tweaking, I came up with an updated API that I was quite pleased with, and that made me think that maybe I’m starting to understand some of the points that were made in that presentation. I thought it would be interesting to revisit the presentation and see if I had assimilated some of his advice into actual work practice, so here’s my self-assessment of whether Måhlén understands Bloch.

First, the problem: the API I modified is used to generate the path part of URLs pointing to a specific category of pages on our web sites – Scorching, which means that a search for something to buy has been specific enough to land on a single product (not just any digital camera, but a Sony DSLR-A230L). The original API looks like this:

```java
public interface ScorchingUrlHelper {
    @Deprecated
    String urlForSearchWithSort(Long categoryId, Product product, Integer sort);
    @Deprecated
    String urlForProductCompare(Long categoryId, Product product);
    @Deprecated
    String urlForProductCompare(String categoryAlias, Product product);
    @Deprecated
    String urlForProductCompare(Category category, Product product);
    @Deprecated
    String urlForProductCompare(Long categoryId, String title, Long id, String keyword);

    String urlForProductDetail(Long categoryId, Product product);
    String urlForProductDetail(String categoryAlias, Product product);
    String urlForProductDetail(Category category, Product product);
    String urlForProductDetail(Long categoryId, String title, Long id, String keyword);

    String urlForProductReview(Long categoryId, Product product);
    String urlForProductReview(String categoryAlias, Product product);
    String urlForProductReview(Category category, Product product);
    String urlForProductReview(Long categoryId, String title, Long id);

    String urlForProductReviewWrite(Long categoryId, String title, Long id);

    String urlForWINSReview(String categoryAlias, Product product);
    String urlForWINSReview(Category category, Product product);
    String urlForWINSReview(Long categoryId, String title, Long id, String keyword);
}
```

Just from looking at the code, it’s clear that the API has evolved over a period and has diverged a bit from its original use. There are some deprecated methods, and there are many ways of doing more or less the same things. For instance, you can get a URL part for a combination of product and category based on different kinds of information about the product or category.

The updated API – which feels really obvious in hindsight but actually took a fair amount of work – that I came up with is:

```java
public interface ScorchingTargetHelper {
    Target targetForProductDetail(Product product, CtrPosition position);
    Target targetForProductReview(Product product, CtrPosition position);
    Target targetForProductReviewWrite(Product product, CtrPosition position);
    Target targetForWINSReview(Product product, CtrPosition position);
}
```

The reason I needed to change the code at all was to add information necessary for tracking click-through rates (CTR) to the generated URLs, so that’s why there is a new parameter in each method. Other than that, I’ve mostly removed things, which was precisely what I was hoping for.

Here are some of the rules from Josh’s presentation that I think I applied:

APIs should be easy to use and hard to misuse.

- Since click tracking, once introduced, will be a core concern, it shouldn’t be possible to forget about adding it. Hence it was made a parameter in every method. People can probably still pass in garbage there, but at least everyone who wants to generate a scorching URL will have to take CTR into consideration.

- Rather than marking the old methods @Deprecated, I made the new API backwards-incompatible, which makes it far harder to misuse. In my opinion, @Deprecated doesn’t really mean anything. I like breaking things properly when I can; otherwise there’s a risk that you don’t notice that something is broken.

- All the information needed to generate one of these URLs is available if you have a properly initialised Product instance – it knows which Category it belongs to, and it knows about its title and id. So I removed the other parameters to make the API more concise and easier to use.
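As the first point admits, the method signatures alone cannot stop a caller from passing garbage as the position. A cheap complement, sketched below with hypothetical simplified types, is to fail fast at construction time using java.util.Objects.requireNonNull. The rule that an empty position code is invalid is an assumption made for the example.

```java
import java.util.Objects;

// Hypothetical minimal types, for illustration only.
final class CtrPosition {
    final String code;

    CtrPosition(String code) {
        // Reject null and (by assumption) empty codes, so garbage fails early.
        if (Objects.requireNonNull(code, "code").isEmpty()) {
            throw new IllegalArgumentException("CTR position code must not be empty");
        }
        this.code = code;
    }
}

final class Target {
    final String path;
    final CtrPosition position;

    Target(String path, CtrPosition position) {
        this.path = Objects.requireNonNull(path, "path");
        // Click tracking is a core concern, so a Target without a position is a bug.
        this.position = Objects.requireNonNull(position, "position");
    }
}
```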

Names matter

- The old class was called ScorchingUrlHelper, and each of its methods was called urlForSomething(). But it didn’t create URLs; it created the path parts of URLs (no protocol, host or port, and query parts are not needed or used for these URLs). The new version returns a Target, an existing internal representation of something that a URL can be created for, and the names of the class and methods reflect that.

When in doubt, leave it out

- I removed a lot of ways to create Targets based on subsets of the data about products and categories. I’m not sure that this strictly means I followed this piece of advice; it’s probably more a question of reducing code duplication and making the API terser. So instead of making the API implementation create Product instances from three different kinds of input, I extracted that logic into a ProductBuilder, and did something similar for Category objects. I also made use of the fact that a Product should already know which Category it belongs to.
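A rough sketch of what extracting that logic into a ProductBuilder might look like follows. The class name comes from the post, but its methods, the CategoryRepository lookup and the simplified Product and Category classes are assumptions. The three ways of identifying a category (by id, by alias, or by object) mirror the overloads in the old interface, but here they live in one place instead of being repeated for every kind of URL.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Objects;

final class Category {
    final long id;
    final String alias;

    Category(long id, String alias) { this.id = id; this.alias = alias; }
}

final class Product {
    final long id;
    final String title;
    final Category category;

    Product(long id, String title, Category category) {
        this.id = id;
        this.title = title;
        this.category = category;
    }
}

// Hypothetical in-memory lookup standing in for however categories are really resolved.
final class CategoryRepository {
    private final Map<Long, Category> idIndex = new HashMap<>();
    private final Map<String, Category> aliasIndex = new HashMap<>();

    void add(Category category) {
        idIndex.put(category.id, category);
        aliasIndex.put(category.alias, category);
    }

    Category byId(long id) {
        return Objects.requireNonNull(idIndex.get(id), "unknown category id: " + id);
    }

    Category byAlias(String alias) {
        return Objects.requireNonNull(aliasIndex.get(alias), "unknown category alias: " + alias);
    }
}

// Hypothetical builder: the different ways of identifying a category are handled
// once here, instead of as overloads on every helper method.
final class ProductBuilder {
    private final CategoryRepository categories;
    private Category category;
    private Long id;
    private String title;

    ProductBuilder(CategoryRepository categories) { this.categories = categories; }

    ProductBuilder category(Category category) { this.category = category; return this; }

    ProductBuilder categoryId(long categoryId) { return category(categories.byId(categoryId)); }

    ProductBuilder categoryAlias(String alias) { return category(categories.byAlias(alias)); }

    ProductBuilder id(long id) { this.id = id; return this; }

    ProductBuilder title(String title) { this.title = title; return this; }

    Product build() {
        Objects.requireNonNull(category, "category");
        Objects.requireNonNull(id, "id");
        Objects.requireNonNull(title, "title");
        return new Product(id, title, category);
    }
}
```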

Keep APIs free of implementation details

- This is another piece of advice that I don’t think I followed very successfully, though it wasn’t too bad. An intermediate version of the API took a CtrNode instead of a CtrPosition. The position in question is a tree hierarchy indicating where on the page the link appears, and a CtrNode object represents a node in that tree. But that is an implementation detail that shouldn’t really be exposed.
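One way to keep the tree out of the API is sketched below, with entirely hypothetical classes: callers pass an immutable CtrPosition value, modelled here as a list of segment names from the root of the page, and only the implementation knows that it maps to a node in an internal CtrNode tree.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Public value type: says where on the page a link is, nothing about how that is stored.
final class CtrPosition {
    private final List<String> segments;

    CtrPosition(String... segments) {
        this.segments = Collections.unmodifiableList(new ArrayList<>(Arrays.asList(segments)));
    }

    List<String> segments() { return segments; }
}

// Internal implementation detail: the page's click-tracking tree.
final class CtrNode {
    final String name;
    private final Map<String, CtrNode> children = new LinkedHashMap<>();

    CtrNode(String name) { this.name = name; }

    CtrNode child(String childName) {
        return children.computeIfAbsent(childName, CtrNode::new);
    }

    // Walks (creating nodes as needed) the path described by a position.
    CtrNode resolve(CtrPosition position) {
        CtrNode node = this;
        for (String segment : position.segments()) {
            node = node.child(segment);
        }
        return node;
    }
}
```

Since callers only ever see CtrPosition, the tree could be restructured or replaced without touching the helper's method signatures.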

Provide programmatic access to all data available in string form

- Returning a Target rather than a String was more suitable, and that change addresses a fairly large part of the original awkwardness that triggered the refactoring.
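The benefit of a structured return type shows up as soon as a caller needs one piece of the data. With the hypothetical Target below (its fields, the PageType enum and the toPath() format are invented for illustration), the page type and product id are available as typed values, whereas with a plain String the caller would have to parse the path back apart.

```java
import java.util.Locale;
import java.util.Objects;

// Hypothetical page types a Target might point at.
enum PageType { PRODUCT_DETAIL, PRODUCT_REVIEW, PRODUCT_REVIEW_WRITE, WINS_REVIEW }

// Hypothetical structured Target: the data stays accessible as typed values,
// and a string form is produced only when one is actually needed.
final class Target {
    private final PageType pageType;
    private final String categoryAlias;
    private final long productId;

    Target(PageType pageType, String categoryAlias, long productId) {
        this.pageType = Objects.requireNonNull(pageType, "pageType");
        this.categoryAlias = Objects.requireNonNull(categoryAlias, "categoryAlias");
        this.productId = productId;
    }

    PageType pageType() { return pageType; }

    String categoryAlias() { return categoryAlias; }

    long productId() { return productId; }

    // Path part only: no protocol, host or port.
    String toPath() {
        String page = pageType.name().toLowerCase(Locale.ROOT).replace('_', '-');
        return "/" + categoryAlias + "/" + page + "/" + productId;
    }
}
```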

Use the right data type for the job

- I think I get points for this in two ways: for using the Product object instead of various combinations of Longs and Strings, and for using the Target as a return type.

There’s a lot of Josh’s advice that I didn’t follow. Much of it relates to his recommendations on how to go about arriving at a good, solid version of the API; I was far less structured in how I went about it. One piece of advice that I arguably went against is:

Don’t make the client do anything the library could do

- I made the client responsible for instantiating the Product rather than allowing multiple similar calls taking different parameters. In this case, I think that avoiding duplication was the more important thing, and that maybe the library couldn’t/shouldn’t do that for the client. Or perhaps, “the library” shouldn’t be interpreted as just “the class in question”. I did add a new class to the library that helps clients instantiate their Products based on the same information as before.

- I made the client go from Target to String to actually get at the path part of the URL. This was more of a special case: the old-style ScorchingUrlHelper classes were instantiated per request, while I wanted something that could potentially be Singleton-scoped. This meant that I either had to add request-specific information (the current subdomain) as a method parameter in the API, or change the return type to a Target and do the rest of the URL generation outside. It felt cleaner to leave that outside, keeping the ScorchingTargetHelper a more focused class with fewer responsibilities and collaborators.
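The resulting split could look something like the sketch below. All the names apart from Target are hypothetical, CtrPosition is left out for brevity, and using the subdomain as part of the host name is an assumption about what the request-specific part of URL generation does. The helper holds no request state, so one shared instance can serve every request, while a small per-request object turns a Target into a full URL.

```java
import java.util.Objects;

// Hypothetical minimal types, for illustration only.
final class Product {
    final long id;
    final String categoryAlias;

    Product(long id, String categoryAlias) {
        this.id = id;
        this.categoryAlias = categoryAlias;
    }
}

final class Target {
    final String path; // path part only: no protocol, host or port

    Target(String path) { this.path = Objects.requireNonNull(path, "path"); }
}

// Holds no request state, so a single shared (singleton-scoped) instance is safe.
final class SharedTargetHelper {
    Target targetForProductDetail(Product product) {
        return new Target("/" + product.categoryAlias + "/detail/" + product.id);
    }
}

// Created once per request: the only place that knows the current subdomain.
final class RequestUrlRenderer {
    private final String subdomain;
    private final String baseDomain;

    RequestUrlRenderer(String subdomain, String baseDomain) {
        this.subdomain = Objects.requireNonNull(subdomain, "subdomain");
        this.baseDomain = Objects.requireNonNull(baseDomain, "baseDomain");
    }

    String toUrl(Target target) {
        return "https://" + subdomain + "." + baseDomain + target.path;
    }
}
```

The same Target can then be rendered differently for each request without the shared helper ever knowing which subdomain is in play.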

So, in conclusion: do I think that I have actually understood the presentation on a deeper level than just the sentences? Well, when I went through the presentation to write this post, I was actually pleasantly surprised at the number of bullet points that I think I followed. I’m still not convinced, though. I think I still have a lot of things to learn, especially in terms of coming up with concise and flexible APIs that are right for a particular purpose. But at least, I think the new API is an improvement over the old one. And what’s more, writing this post by going back to that presentation and thinking about it in direct relationship to something I just did was a useful exercise. Maybe I learned a little more about API design by doing that!