Posts Tagged Git

Immutability: a constraint that empowers

Posted by Petter Måhlén in Java, Software Development on May 4, 2010

In Effective Java (Second Edition, item 15), Josh Bloch argues that Java programmers should treat immutability as the default for their objects, and that mutability should be used only when strictly necessary. I am writing this post because most Java code I read doesn’t follow this advice, and because I have come to the conclusion that immutability is an amazing concept. My theory is that one of the reasons why immutability isn’t as widely used as it should be is that as programmers or architects, we usually want to open up possibilities: a flexible architecture allows for future growth and changes and gives as much freedom as possible to solve coding problems in the most suitable way. Immutability seems to go against that desire for flexibility in that it takes an option away: if your architecture involves immutable objects, you’ve reduced your freedom because those objects can’t change any longer. How can that be good?

The two main motivations for using immutability according to Effective Java are that a) it is easier to share immutable objects between threads and b) immutable objects are fundamentally simple because they cannot change state once instantiated. The former seems to be the most commonly repeated argument in favour of using immutable objects – I think probably because it is far more concrete: make objects immutable and they can easily be shared by threads. The latter, that immutable objects are fundamentally simple, is the more powerful (or at least more ubiquitous) reason in my opinion, but the argument is harder to make. After all, there’s nothing you can do with an immutable object that you cannot also do with a mutable one, so why constrain your freedom? I hope to illustrate a couple of ways that you both open up macro-level architectural options and improve your code on the micro level by removing your ability to modify things.

Macro Level: Distributed Systems

First, I would like to spend some time on the concurrency argument and look at it from a more general perspective than just Java. Let’s take something that is familiar to anybody who has worked on a distributed system: caching. A cache is a (more) local copy of something where the master copy is for some reason expensive to fetch. Caching is extremely powerful and it is used literally everywhere in computing. The fact that the item in the cache could be different to the master copy, due to either one of them having changed, leads to a lot of problems that need solving. So there are algorithms like read-through, refresh-ahead, write-through, write-behind, and so on, that deal with the problem that the data in your cache or caches may be outdated or in a ‘future state’ when compared to the master copy. These problems are well-studied and solved, but even so, having to deal with stale data in caches adds complexity to any system that has to deal with it. With immutable objects, these problems just vanish. If you have a copy of a certain object, it is guaranteed to be the current one, because the object never changes. That is extremely powerful in designing a distributed architecture.

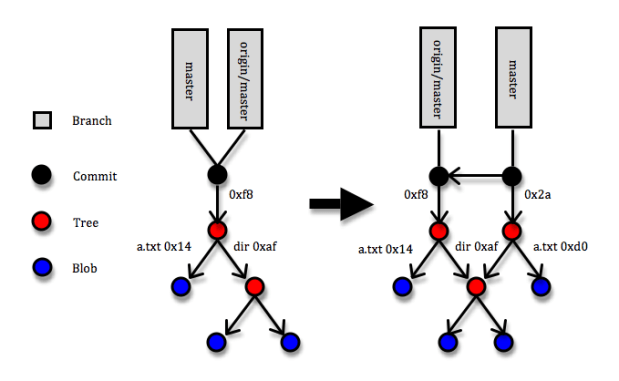

I wonder if I can write a blog post that doesn’t mention Git – apparently not this one at least :). Git is a marvellous example of a distributed architecture whose power comes to a large degree from reducing mutability. Most of the entities in Git are immutable: commits, trees and blobs, all of which are referenced via keys that are SHA-1 hashes of their contents. If a blob (a file) changes, a new entity in Git is created with a new hash code. If a tree (a directory – essentially a list of hash codes and names that identify trees or blobs that live underneath it) changes through a file being added, removed or modified, a new tree entity with new contents and a new hash code is created. And whenever some changes are committed to a Git repository, a commit entity that describes the change set is created (with a reference to the new top tree entity, some metadata about who made the commit and when, a pointer to the previous commit/s, etc). Since the reference to each object is uniquely defined by its content, two identical objects that are created separately will always have the same key. (If you want to learn more about Git internals, here is a great though not free explanation.) So what makes that brilliant? The answer is that the immutability of objects means your copy is guaranteed to be correct, and the SHA-1 object references mean that identical objects are guaranteed to have identical references. That also speeds up identity checks: since collisions are extremely unlikely, identical references are the same as objects being identical. Suppose somebody makes a commit that includes a blob (file) with hash code 14 and you fetch the commit to your local Git repository, and suppose further that a blob with hash code 14 is already in your repository. When Git fetches the commit, it’ll come to the tree that references blob 14 and simply note that “I’ve already got blob 14, so no need to fetch it”.

The diagram above shows a simplified picture of a Git repository where a commit is added. The root of the repository is a folder with a file called ‘a.txt’ and a directory called ‘dir’. Under the directory there are a couple more files. In the commit, the a.txt file is changed. This means that three new entities are created: the new version of a.txt (hash code 0xd0), the new version of the root directory (hash code 0x2a), and the commit itself. Note that the new root directory still points to the old version of the ‘dir’ directory and that none of the objects that describe the previous version of the root directory are affected in any way.
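To make the content-addressing idea concrete, here is a minimal sketch of a store whose keys are SHA-1 hashes of the stored bytes. The `ContentStore` class and its method names are my own invention for illustration. Real Git also adds a type and length header before hashing and compresses what it stores, so the keys produced here will not match Git's.

```java
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.HashMap;
import java.util.Map;

// Hypothetical toy store, not Git's actual object format.
final class ContentStore {
    private final Map<String, byte[]> objects = new HashMap<String, byte[]>();

    // Stores the content under the SHA-1 of its bytes and returns that key.
    // Identical content always yields the same key, so it is stored only once.
    String put(byte[] content) {
        String key = sha1Hex(content);
        if (!objects.containsKey(key)) {
            objects.put(key, content.clone());
        }
        return key;
    }

    // "I've already got this one, so no need to fetch it."
    boolean contains(String key) {
        return objects.containsKey(key);
    }

    int size() {
        return objects.size();
    }

    static String sha1Hex(byte[] content) {
        try {
            MessageDigest md = MessageDigest.getInstance("SHA-1");
            StringBuilder hex = new StringBuilder();
            for (byte b : md.digest(content)) {
                hex.append(String.format("%02x", b));
            }
            return hex.toString();
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("SHA-1 not available", e);
        }
    }
}
```

Because nothing stored under a key ever changes, a `contains` check on the key is enough to decide whether an object needs to be transferred at all.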

That Git uses immutable objects in combination with references derivable from the content of objects is what opens up possibilities for being very efficient in terms of networking (only transferring necessary objects), local space storage (storing identical objects only once), comparisons and merges (when you reach a point where two trees point to the same object, you stop), etc., etc. Note that this usage of immutable objects is quite different from the one you read about in books like Effective Java and Java Concurrency in Practice – in those books, you can easily get the impression that immutability is something that requires taking some pretty special measures with regard to how you specify your classes, inheritance and constructors. I’d say that is only a part of the truth: if you do take those special measures in your Java code, you’ll get the benefit of some specific guarantees made by the Java Memory Model that will ensure you can safely share your immutable objects between threads inside a JVM. But even without solid guarantees about immutability like the JVM can provide, using immutability can provide huge architectural gains. So while Git doesn’t have any means of enforcing strict immutability – I can just edit the contents of something stored under .git/objects, with probable chaos ensuing – utilising it opens up for a very powerful architecture.

When you’re writing multi-threaded Java programs, use the strong guarantees you can get from defining your Java classes correctly, but don’t be afraid to make immutability a core architectural idea even if there is no way to actually formally enforce it.
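As a reminder of what "defining your Java classes correctly" usually looks like, here is a small sketch in the spirit of item 15: a final class, final fields, a defensive copy in the constructor, and no setters. The `Playlist` class is a made-up example, not something from Effective Java.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

// Hypothetical value class, used only to illustrate the pattern.
final class Playlist {
    private final String name;
    private final List<String> tracks;

    Playlist(String name, List<String> tracks) {
        this.name = name;
        // Defensive copy: later changes to the caller's list cannot leak in.
        this.tracks = Collections.unmodifiableList(new ArrayList<String>(tracks));
    }

    String name() {
        return name;
    }

    // The returned list is an unmodifiable view, so callers cannot change it.
    List<String> tracks() {
        return tracks;
    }

    // "Modification" returns a new instance and leaves this one untouched.
    Playlist withTrack(String track) {
        List<String> copy = new ArrayList<String>(tracks);
        copy.add(track);
        return new Playlist(name, copy);
    }
}
```

Once you have checked the constructor, there is nothing else in this class that can change an instance, which is exactly the property that makes it safe to share and easy to reason about.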

Micro Level: Building Blocks

I guess the clearest point I can make about immutability, like most of the others who have written about it, is still one about concurrent execution. But I am a believer also in the second point about the simplicity that immutability gives you even if your system isn’t distributed. For me, the essence of that point is that you can disregard any immutable object as a source of weirdness when you’re trying to figure out how the code in front of you is working. Once you have confirmed to yourself that an immutable object is being instantiated correctly, it is guaranteed to remain correct for the rest of the logical flow you’re looking at. You can filter out that object and focus your valuable brainpower on other things. As a former chess player, I see a strong analogy: once you’ve played a bit, you stop seeing three pawns, a rook and a king, you see a king-side castle formation. This is a single unit that you know how it interacts with the rest of the pieces on the board. This allows you to simplify your mental model of the board, reducing clutter and giving you a clearer picture of the essentials. A mutable object resists simplification, so you’ll constantly need to be alert to something that might change it and affect the logic.


On top of the simplicity that the classes themselves gain, by making them immutable, you can communicate your intent as a designer clearly: “this object is read-only, and if you believe you want to change it in order to make some modification to the functionality of this code, you’ve either not yet understood how the design fits together or requirements have changed to such an extent that the design doesn’t support them any longer”. Immutable objects make for extremely solid and easily understood building blocks and give the readers clear signals about their intended usage.

When immutability doesn’t cut the mustard

There are very few things that are all beneficial, and this is of course the case with immutability. So what are the problems you run into with immutability? Well, first of all, no system is interesting without mutable state, so you’ll need at least some objects that are mutable – in Git, branches are a prime example of a mutable object, and hence also the one source of conflicts between different repositories. A branch is simply a pointer to the latest commit on that branch, and when you create new commits on a branch, the pointer is updated. In the diagram above, that is reflected by the ‘master’ label having moved to point to the latest commit, but the local copy of where ‘origin/master’ is pointing is unchanged and will remain so until the next push or fetch happens. In the meantime, the actual branch on the origin repository may have changed, so Git needs to handle possible conflicts there. So the branches suffer from the normal cache-related staleness and concurrent update problems, but thanks to most entities being immutable, these problems are confined to a small section of the system.
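The split between immutable commits and mutable branch pointers can be sketched roughly like this. The `Commit` and `Branches` classes are hypothetical and heavily simplified: a plain string stands in for the SHA-1 key and each commit has at most one parent.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical model: commits are immutable values.
final class Commit {
    final String id;        // stands in for the SHA-1 key
    final String parentId;  // null for the first commit on a branch

    Commit(String id, String parentId) {
        this.id = id;
        this.parentId = parentId;
    }
}

// The branch table is the only mutable state in this sketch.
final class Branches {
    private final Map<String, String> heads = new HashMap<String, String>();

    // Committing never alters existing commits; it only moves the branch pointer.
    Commit commit(String branch, String newId) {
        Commit c = new Commit(newId, heads.get(branch));
        heads.put(branch, c.id);
        return c;
    }

    String head(String branch) {
        return heads.get(branch);
    }
}
```

All the staleness and concurrent-update problems live in the `heads` map; a `Commit` that you already hold can never become outdated.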

Another thing you’ll need, which is provided for free by a JVM but not in systems in general, is garbage collection. You’ll keep creating new objects instead of changing the existing ones, and for most kinds of systems, that means you’ll want to get rid of the outdated stuff. Most of the time, that stuff will simply sit on some disk somewhere, and disk space is cheap, which means you don’t need to get super-sophisticated with your garbage collection algorithms. In general, the memory allocation and garbage collection overhead that you incur from creating new objects instead of updating existing ones is much less of a problem than it seems at first glance. I’ve personally run into one case where it was clearly impossible to make objects immutable: in Ardor3D, an open-source Java 3D engine, where the most commonly used class is Vector3, a vector of three doubles. This feels like a great candidate for being immutable since it is a typical small, simple value object. However, the main task of a 3D engine is to update many thousands of positions of things at least 50 times per second, so in this case, immutability would be completely impossible. Our solution there was to signal ‘immutability intent’ by having the Vector3 class (and some similar classes) implement a ReadOnlyVector3 interface and use that interface when some class needed a vector but wasn’t planning on modifying it.
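The ‘immutability intent’ pattern looks roughly like the sketch below. The type names `Vector3` and `ReadOnlyVector3` come from the description above, but the method names and the `Distance` helper are assumptions made for illustration; this is not the actual Ardor3D source.

```java
// Simplified sketch; method names are assumed, not taken from Ardor3D.
interface ReadOnlyVector3 {
    double getX();
    double getY();
    double getZ();
}

final class Vector3 implements ReadOnlyVector3 {
    private double x;
    private double y;
    private double z;

    Vector3(double x, double y, double z) {
        this.x = x;
        this.y = y;
        this.z = z;
    }

    public double getX() { return x; }
    public double getY() { return y; }
    public double getZ() { return z; }

    // Mutates in place to avoid allocating a new object on every update.
    Vector3 addLocal(ReadOnlyVector3 other) {
        x += other.getX();
        y += other.getY();
        z += other.getZ();
        return this;
    }
}

// Hypothetical consumer that only reads its arguments.
final class Distance {
    // Accepting the read-only view signals that the vectors will not be modified here.
    static double between(ReadOnlyVector3 a, ReadOnlyVector3 b) {
        double dx = a.getX() - b.getX();
        double dy = a.getY() - b.getY();
        double dz = a.getZ() - b.getZ();
        return Math.sqrt(dx * dx + dy * dy + dz * dz);
    }
}
```

Note that this is a signal of intent and not a guarantee: whoever holds the underlying `Vector3` can still change it, and a caller could cast the interface back to the mutable class.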

There’s a lot more solid but semi-concrete advice about why immutability is useful outside of the context of sharing state between threads in Effective Java, so if you’re not convinced about it yet, I would urge you to re-read item 15. Or maybe the whole book, since everything Josh Bloch says is fantastic. To me, the use of immutability in Git shows that removing some aspects of freedom can unlock opportunities in other parts of the system. I also think that you should do everything you can do that will make your code easier for the reader to understand. Reducing mutability can have a game-changing impact on the macro level of your system, and it is guaranteed to help remove mental clutter and thereby improve your code on the micro level.

Code Sharing: Use Git

Posted by Petter Måhlén in Code Management on March 28, 2010

(This is item 2 in the code sharing cookbook)

Joel Spolsky used what seems to be his last blog post to talk about Git and Mercurial. I like his description of their main benefit as being that they track changes rather than revisions, and like him, I don’t particularly like the classification of them as distributed version control systems. As I’ve mentioned before, the ‘distributed’ bit isn’t what makes them great. In this post, I’ll try to explain why I think that Git is a great VCS, especially for sharing code between multiple teams – I’ve never used Mercurial, so I can’t have any opinions on it. I will use SVN as the counter-example of an older version control system, but I think that in most of the places where I mention SVN, it could be replaced by any other ‘centralised’ VCS.

By far the biggest reason to use Git when sharing code is its support for branching and merging. The main issue at work here is the conflict between two needs: each team needs complete control of its code and environments in order to develop its features effectively, while the organisation as a whole needs to detect and resolve conflicting changes as quickly as possible. I’ll probably have to explain a little more clearly what I mean by that.

Assume that Team Red and Team Blue are both working on the same shared library. If they push their changes to the exact same central location, they are likely to interfere with each other. Builds will break, bugs will be introduced in parts of the code supposedly not touched, larger changes may be impossible to make and there will be schedule conflicts – what if Team Blue commits a large and broken change the day before Team Red is going to release? So you clearly want to isolate teams from each other.

On the other hand, the longer the two teams’ changes are isolated, the harder it is to find and resolve conflicting changes. Both volume of change and calendar time are important here. If the volume of changes made in isolation is large and the code doesn’t work after a merge, the volume of code to search in order to figure out the problem is large. This of course makes it a lot harder to figure out where the problem is and how to solve it. On top of that, if a large volume of code has been changed since the last merge, the risk that a lot of code has been built on top of a faulty foundation is higher, which means that you may have wasted a lot of effort on something you’ll need to rewrite.

To explain how long calendar time periods between merges are a problem, imagine that it takes maybe a couple of months before a conflict between changes is detected. At this time, the persons who were making the conflicting changes may no longer remember exactly how the features were supposed to work, so resolving the conflicts will be more complicated. In some cases, they may be working in a totally different team or even have left the company. If the code is complicated, the time when you want to detect and fix the problem is right when you’re in the middle of making the change, not even a week or two afterwards. Branches represent risk and untestable potential errors.

So there is a spectrum between zero isolation and total isolation, and it is clear that the extremes are not where you want to be. That’s very normal and means you have a curve looking something like this:

You have a cost due to team interference that is high with no isolation and is reduced by introducing isolation, and you have a corresponding cost due to the isolation itself that goes up as you isolate teams more. Obviously the exact shape of the curves is different in different situations, but in general you want to be at some point between the extremes, close to the optimum, where teams are isolated enough for comfort, yet merges happen soon enough to not allow the conflict troubles to grow too large.

So how does all that relate to Git? Well, Git enables you to fine-tune your processes on the X axis in this diagram by making merges so cheap that you can do them as often as you like, and through its various features that make it easier to deal with multiple branches (cherry-picking, the ability to identify whether or not a particular commit has gone into a branch, etc.). With SVN, for instance, the costs incurred by frequent merges are prohibitive, partly because making a single merge is harder than with Git, but probably even more because SVN can only tell that there is a difference between two branches, not where the difference comes from. This means that you cannot easily do intermediate merges, where you update a story branch with changes made on the more stable master branch in order to reduce the time and volume of change between merges.

At every SVN merge, you have to go through all the differences between the branches, whereas Git’s commit history for each branch allows you to remember choices you made about certain changes, greatly simplifying each merge. So during the second merge, at commit number 5 in the master branch, you’ll only need to figure out how to deal with (non-conflicting) changes in commits 4 and 5, and during the final merge, you only need to worry about commits 6 and 7. In all, this means that with SVN, you’re forced closer to the ‘total isolation’ extreme than you would probably want to be.
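One way to picture why tracking commits makes intermediate merges cheap: if every commit knows its parents, the commits that still need to be dealt with are simply those reachable from one branch but not from the other. The sketch below is my own simplified model of that idea (the `CommitGraph` class and its methods are hypothetical), not a description of how Git implements merging.

```java
import java.util.ArrayDeque;
import java.util.Deque;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

// Hypothetical model of a commit graph: each commit id maps to its parent ids.
final class CommitGraph {
    private final Map<String, List<String>> parents;

    CommitGraph(Map<String, List<String>> parents) {
        this.parents = parents;
    }

    // All commits reachable from the given head, including the head itself.
    Set<String> reachableFrom(String head) {
        Set<String> seen = new LinkedHashSet<String>();
        Deque<String> todo = new ArrayDeque<String>();
        todo.push(head);
        while (!todo.isEmpty()) {
            String id = todo.pop();
            if (seen.add(id)) {
                List<String> ps = parents.get(id);
                if (ps != null) {
                    for (String p : ps) {
                        todo.push(p);
                    }
                }
            }
        }
        return seen;
    }

    // Commits on 'source' that have not yet gone into 'target'.
    Set<String> notYetMerged(String source, String target) {
        Set<String> result = new LinkedHashSet<String>(reachableFrom(source));
        result.removeAll(reachableFrom(target));
        return result;
    }
}
```

In this model, if a story branch already contains a merge commit whose parents include master commit 3, then asking what is not yet merged from master commit 5 yields only commits 4 and 5, which matches the situation described above.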

Working with Git has actually totally changed the way I think about branches – I used to say something along the lines of ‘only branch in extreme situations’. Now I think having branches is a good, normal state of being. But the old fears about branching are not entirely invalidated by Git. You still need to be very disciplined about how you use branches, and for me, the main reason is that you want to be able to quickly detect conflicts between them. So I think that branches should be short-lived, and if that isn’t feasible, that relatively frequent intermediate merges should be done. At Shopzilla, we’ve evolved a de facto branching policy over a year of using Git, and it seems to work quite well:

- Shared code with a low rate of change: a single master branch. Changes to these libraries and services are rare enough that two teams almost never make them at the same time. When they do, the second team that needs to make changes to a new release of the library creates a story branch and the two teams coordinate about how to handle merging and releasing.

- Shared code with a high rate of change: semi-permanent team-specific branches and one team has the task of coordinating releases. The teams that work on their different stories/features merge their code with the latest ‘master’ version and tell the release team which commits to pick up. The release team does the merge and update of the release branch and both teams do regression QA on the final code before release. This happens every week for our biggest site.

- Team-specific code: the practice in each team varies but I believe most teams follow similar processes. In my team, we have two permanent branches that interleave frequently: release and master, and more short-lived branches that we create on an ad-hoc basis. We do almost all of our work on the master branch. When we’re starting to prepare a release (typically every 2-3 weeks or so), we split off the release branch and do the final work on the stories to be released there. Work on stories that didn’t make it into the release goes onto the master branch as usual. It is common that we have stories that we put on story-specific branches, when we don’t believe that they will make it into the next planned release and thus shouldn’t be on master.

The diagram above shows a pretty typical state of branches for our team. Starting from the left, the work has been done on the master branch. We then split off the release branch and finalise a release there. The build that goes live will be the last one before merging release back into master. In the mean time, we started some new work on the master branch, plus two stories that we know or believe we won’t be able to finish before the next release, so they live in separate branches. For Story A, we wanted to update it with changes made on the release and master branch, so we merged them into Story A shortly before it was finished. At the time the snapshot is taken, we’ve started preparing the next release and the Story A branch has been deleted as it has been merged back into master and is no longer in use. This means that we only have three branches pointing to commits as indicated by the blueish markers.

This blog post is now far longer than I had anticipated, so I’m going to have to cut the next two advantages of Git shorter than I had planned. Maybe I’ll get back to them later. For now, suffice it to say that Git allows you to do great magic in order to fix mistakes that you make and even extracting and combining code from different repositories with full history. I remember watching Linus Torvalds’ Tech Talk about Git and that he said that the performance of Git was such that it led to a quantum change in how he worked. For me working with Git has also led to a radical shift in how I work and how I look at code management, but it’s not actually the performance that is the main thing, it is the whole conceptual model with tracking commits that makes branching and merging so easy that has led to the shift for me. That Git is also a thousand times (that may not be strictly true…) faster than SVN is of course not a bad thing either.

Centralise Your Sources!

Posted by Petter Måhlén in Code Management, Software Development on February 25, 2010

The fact that Git doesn’t require a central repository but can function as a completely distributed system for version control is often touted as its main benefit. People who prefer having a central repository are sometimes told they just don’t get it. I think that the distributed nature of Git might be a beneficial thing for some teams of people, but I don’t see that it works very well for all situations. Maybe I don’t get it (but of course I think I do :)).

Here’s my concrete example of when I think distributed version control is a bad choice. At work, we have 5-6 teams of developers working on part of a fairly large service-oriented system. These teams work on around 50 different source projects that contain the source code for various services and shared libraries. We’re using Scrum, which means that we want to ensure every team is empowered to make the changes it needs to fully implement a front-end feature. This means that every team has the right to make modifications to any one of these 50 projects. There are of course limits to the freedom – the total number of source projects at Shopzilla is over 100 (maybe closer to 200, I never counted), and the remaining source code is outside of the things that me and my colleagues in other teams are working on. But the subset of our systems I am using as an example consists of probably 50 projects that are worked on by around 20 developers in 5 teams with different goals.

As if this picture wasn’t complicated enough, each team typically has a sprint length of 2 weeks after which finished features go live (although one team does releases every week). Some features are larger than what we can finish in one sprint, so we normally have a few branches for the main project and a couple of libraries that are affected by a large feature. So we’ll have the release branch in case we need a hot fix, master for things going into the next release, and 0-2 story branches for longer stories – per team. Naturally the rate of change varies a lot; some libraries are very stable meaning that they will only have one branch, while the top-level projects (typically corresponding to a shopping comparison site) change all the time and are likely to have at least three branches concurrently being worked on.

Of course, in a situation with so many moving parts, you need to be able to:

- reliably manage what dependencies go into what builds,

- reliably manage what builds are used where,

- detect conflicts between changes and teams quickly, and

- detect regression bugs quickly and reliably.

All that requires quick and easy information exchange between teams about code changes. If we used distributed version control, we would have to figure out how to consolidate differences between 25-30 developers’ repositories. That is hard and would take a lot of time away from actual coding, not to mention adding lots of mistakes due to broken dependencies and configuration management errors.

When I say hard, I mean that partly in a mathematical sense. Fully distributed version control is actually an example of a complete graph. That means that the number of possible/necessary communication links between different repositories increases quickly as the number of repositories increases (it is n*(n-1)/2 for those who want the full formula). So, for two people, you only have one link that needs to be updated. If there are three repositories, you need three links, and four repositories leads to six links. When you hit 10 repositories, there are 45 possible ways that code can be pulled, and at 20, 190. This large number of possible ways to get code updates (and get them wrong) is unmanageable. As far as I understand, the practice in teams using distributed source control is to have “code lieutenants” whose role is essentially to divide the big graph of developer repositories into smaller sub-graphs, thereby reducing the number of links.

In the case above, there are 3 * 5(5-1)/2 + 3(3-1)/2 = 33 connections between 15 developers instead of 15(15-1)/2 = 105.

Now, the way it looks with a centralised system is much simpler as the number of developers increases. This corresponds to a star graph, where the number of connections is equal to the number of developers.
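The arithmetic behind these comparisons is easy to check in code. In the sketch below, the `RepositoryLinks` class and its method names are made up for illustration, and the lieutenant model (equal-sized teams that are fully connected internally, plus one lieutenant per team connected to every other lieutenant) is my reading of the 33-connection example above.

```java
// Hypothetical helper for counting communication links between repositories.
final class RepositoryLinks {
    // Complete graph: every repository can exchange code with every other one.
    static int fullyDistributed(int repositories) {
        return repositories * (repositories - 1) / 2;
    }

    // Assumed model: each team is a complete graph, and one lieutenant
    // per team forms another complete graph with the other lieutenants.
    static int withLieutenants(int teams, int teamSize) {
        return teams * fullyDistributed(teamSize) + fullyDistributed(teams);
    }

    // Star graph: one link from each developer to the central repository.
    static int centralised(int developers) {
        return developers;
    }
}
```

For 15 developers this gives 105 links when fully distributed, 33 with three teams of five and their lieutenants, and 15 with a central repository.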

I guess it is conceivable that with say 25 developers you could handle the number of connections required to manage the source code for a few repositories even with distributed code management. But when you have a situation with 50 source projects and a number of branches, I think you need to do everything in your power to remove necessary interaction paths.

Even supposing that the communication problems are less serious than I think they are, I’m unsure of the advantages to using a distributed version control system in a corporate setting. Well, why beat about the bush: I don’t see any. Wikipedia has an article listing some more or less dubious advantages of distributed source control, where many of them relate to an implementation detail of Git rather than distributed source control as such (the use of the local repository that saves a lot of network overhead and speeds up many commands). For me, the main advantage of centralised source control in a corporate setting is that you really want to be able to quickly and reliably confirm that teams that are supposed to be working together actually are. Centralised source control lends itself to continuous integration with heavy usage of automated testing to detect conflicting changes that lead to build failures or regression bugs. Distributed source control doesn’t.

We’re using Git at Shopzilla, and I’m loving it. For me, the main reason not to use Git is that it is hard to understand. I guess one obstacle to understanding Git is that its distributed nature is new and different, but I kind of feel that the importance of that is overstated and therefore it should be deemphasised. The other main hurdle, that it has “a completely baffling user interface that makes perfect sense IF YOU’RE A VULCAN” is a harder problem to get around. But if you work with a tool 8 hours a day, you’ll learn to use it even if it is complicated. What I love about Git isn’t that it is distributed, but that:

- It is super-fast.

- Its architecture is fundamentally right – a collection of immutable objects identified by keys that are derived from the objects (the hash codes), with a set of labels (branches, tags, etc.) pointing into relevant places. This just works for a VCS.

- Its low-level nature and the correctness of the architecture mean that when I make mistakes, I can always fix them. And there seems to be no way of getting a Git repository into a corrupt state even when you manually poke its internals.

- It excels at managing branches – I think the way we’re working at Shopzilla is right for us, and I think that if we had stayed with SVN, we couldn’t do it.

Git and Maven

Posted by Petter Måhlén in Code Management, Software Development on February 20, 2010

There was a recent comment to a bug I posted in the Maven Git SCM Provider that triggered some thoughts. The comment was:

“GIT is a distributed SCM. There IS NO CENTRAL repository. Accept it.

Doing a push during the release process is counter to the GIT model.”

In general, the discussions around that bug have been quite interesting and very different from what I expected when I posted it. My reason for calling it a bug was that an unqualified ‘push‘ tries to push everything in your local git repository to the origin repository. That can fail for some branch that you’ve not kept up to date even if it is a legal operation for the branch that you’re currently doing a release of. Typically, that other branch has moved a bit, so your version is a couple of commits behind. A push in that state will abort the maven release process and leave you with some pretty tricky cleaning up to do (edit: Marta has posted about how to fix that). A lot of people commenting on the bug have made comments about how Git is distributed and therefore push shouldn’t be done at all, or be made optional.

I think that the issue here is that there is an impedance mismatch between Git and Maven. While Git is a distributed version control system – that of course also supports a centralised model perfectly well – the Maven model is fundamentally a centralised one. This is one case where the two models conflict, and my opinion is that the push should indeed happen, just in a way that is less likely to break. The push should happen because when doing a Maven release, supporting Maven’s centralised model is more important than supporting Git’s distributed model.

The main reason why Maven needs to be centralised is the way that artifact versions are managed. If releasing can be done by different people from local repositories without any central coordination, there is a big risk of different people creating artifact versions that are not the same. The act of creating a Maven release is in fact saying that “This binary package is version 2.1 of this artifact, and it will never change”. There should never be two versions of 2.1. Git of course gets around this problem using hashes of the things it version controls instead of sequential numbers, and if two things are identical, they will have the same hash code = the same version number. Maven produces artifacts on a higher conceptual level, where sequential version numbers are important, so there needs to be a central location that determines what is the next version number to use and provides a ‘master’ copy of the published artifacts.

I’ve also thought a bit about centralised versus distributed version management and when the different choices might work, but I think I’ll leave that for another post at another time (EDIT: that time was now). Either way, I think that regardless of the virtues of distributed version management systems like Git, Maven artifacts need to be managed centrally. It would be interesting to think about what a distributed dependency management system would look like…