Petter Måhlén

Just a programmer

Homepage: https://pettermahlen.wordpress.com

DCI Architecture – Good, not Great, or Both?

Posted in Software Development on September 10, 2010

A bit more than a week ago, my brother sent me a couple of links about DCI: the vision paper, a presentation by James Coplien and some other stuff. There were immediately some things that rang true to me, but there were also things that felt weird – in that way that means that either I or the person who wrote it has missed something. Since then, I’ve spent a fair amount of time trying to understand as much as I can of the thoughts behind DCI. This post, which probably won’t make a lot of sense unless you’ve read some of the above materials, is a summary of what I learned and what I think is incomplete about the picture of DCI that is painted today.

The Good

I immediately liked the separation of data, context and roles (roles are not part of the acronym, but are stressed much more than interactions in the materials I’ve found). It was the sort of concept that matches your intuitive thinking, but that you haven’t been able to phrase, or perhaps not even grasped that there was a concept there to formulate. I have often felt that people attach too much logic to objects that should be dumb (probably in an attempt to avoid anemic domain models; like Mr Coplien, apparently, I have never quite understood why those are considered such a horror). Thinking of objects as either containing data or playing some more active role in a context makes great sense, and makes it easier to figure out which logic should go where. And I second the opinion that algorithms shouldn’t be scattered in a million places – at least in the sense that at a given level of abstraction, you should be able to get an overview of the whole algorithm in some single place.

Another thing I think is great is looking at the mapping between objects in the system and things in the user’s mental model. That mapping should be intuitive in order to make it very easy to understand what to do in order to achieve a certain result. Of course, in any system, there are a lot of objects that are completely uninteresting for – and hopefully invisible to – end users, but very central to another kind of software users: programmers. Thinking about DCI has made me think that two mappings are central: the one between the end user’s mental model and the objects that s/he needs to interact with, and the one between the programmer’s mental model and the objects that s/he needs to interact with and understand. And DCI talks about dumb data objects, which I think is right from the perspective of creating an intuitive mapping. I think most people tend to think of some things as more passive and others as active. So a football can bounce, but it won’t do so of its own accord. It will only move if it is kicked by a player, an actor. It probably won’t even fall unless pulled downwards by gravity, another active object. It’s not exactly dumb, because bouncing is pretty complicated; it just doesn’t do much unless nudged, and certainly nothing in terms of coordinating the actions of other objects around it. The passive objects are the Data in DCI, and the active objects are the algorithms that coordinate and manipulate the passive objects.

The notion of having a separate context for each use case, and ‘casting’ objects to play certain roles in that context, feels like another really good idea. What seems to happen in most of the systems I’ve seen is that you do some wiring of objects either based on the application scope or, in a web service, occasionally the request scope. Then you don’t get more fine-grained with how you wire up your object graph, so typically, there are conditional paths of logic within the various objects that allow them to behave differently based on user input. So for every incoming request, say, you have the exact same object graph that needs to be able to handle it differently based on the country the user is in, whether he has opted to receive email notifications, etc. This is far less flexible and testable than tailoring your object graph to the use case at hand, connecting exactly the set of objects that are needed to handle it, and leaving everything else out.

The Not Great

The thing that most clearly must be broken in the way that James Coplien presents DCI is the use in some languages of static class composition. That is, at compile time, each domain object declares which roles it can play. This doesn’t scale to large applications or systems of applications because it creates a tight coupling between layers that should be separated. Coplien talks about the need for separating things that change rarely from those that change often when he discusses ‘shear layers’ in architecture, but doesn’t seem to notice that the static class composition actually breaks that. You want to have relatively stable foundations at the bottom layers of your systems, with a low rate of change, and locate the faster-changing bits higher up where they can be changed in isolation from other parts of the system. Static class composition means that if two parts of the system share a domain class, and that class needs to play a new role in one, the class will have to change in the other as well. This leads to a high rate of change in the shared code, which is problematic. Dynamic class composition using things like traits in Scala or mixins feels like a more promising approach.

But anyway, is class composition/role injection really needed? In the examples that are used to present DCI – the spell checker and the money transfer – it feels like they are attaching logic to the wrong thing. To me, as a programmer with a mental model of how things happen in the system, it feels wrong to have a spell checking method in a text field, or a file, or a document, or a selected region of text. And it feels quite unintuitive that a source account should be responsible for a money transfer. Spell checking is done by a spell checker, of course, and that spell checker can process text in a text field, a file, a document or a selected region. A spell checker is an active or acting object, an object that encodes an algorithm, as opposed to a passive one. Similarly, an account doesn’t transfer money from itself to another, or from another to itself. That is done by something else (a money transferrer? probably more like a money transaction handler), again an active object. So while I see the point of roles and contexts, I don’t see the point of making a dumb, passive, data object play a role that is cut out for an active, smart object.

My Thoughts

First, I haven’t yet understood how Dependency Injection, DI, is ‘one of the blind men that only see a part of the elephant’. To me, possibly still being blind, it seems like DI can work just as well as class composition. Here’s what the money transfer example from the DCI presentation at QCon in London this June looks like with Java and DI:

interface SourceAccount {...}

interface DestinationAccount {...}

class SavingsAccount implements SourceAccount, DestinationAccount {...}

class PhoneBill implements DestinationAccount {...}

public class MoneyTransactionHandler {
    private final GUI gui;

    public MoneyTransactionHandler(GUI gui) {
        this.gui = gui;
    }

    public void transfer(Currency amount,
                         SourceAccount source,
                         DestinationAccount destination) {
        beginTransaction();
        if (source.balance() < amount) {
            throw new InsufficientFundsException();
        }
        source.decreaseBalance(amount);
        destination.increaseBalance(amount);
        source.updateLog("Transfer out", new Date(), amount);
        destination.updateLog("Transfer in", new Date(), amount);
        gui.displayScreen(SUCCESS_DEPOSIT_SCREEN);
        endTransaction();
    }
}

This code is just as readable and reusable as the code in the example, as far as I can tell. I don’t see the need for class composition/role injection where you force a role that doesn’t naturally fit it upon an object.

Something that resonates well with some thoughts I’ve had recently around how we should think about the way we process requests is using contexts as DI scopes. At Shopzilla, every page that is rendered is composed of the results of maybe 15-20 service invocations. These are processed in parallel, and rendering of the page starts as soon as service results for the top of the page are available. That is great, but there are problems with the way that we’re dealing with this. All the data that is needed to render parts of the page (we call them pods) needs to be passed as method parameters as pods are typically singleton-scoped. In order to get a consistent pod interface, this means that the pods take a number of kitchen sink objects – essentially maps containing all of the data that was submitted as a part of the URL, anything in the HTTP session, the results of all the service invocations, and things that are results of logic that was previously executed while processing the request. This means that everything has access to everything else and that we get occasional strange side effects, that things are harder to test than they should be, etc. If we would look at the different use cases as different DI scopes, we could instead wire up the right object graph to deal with the use case at hand and fire away, each object getting exactly the data it needs, no more, no less. It seems like it’s easy to get stuck on the standard DI scopes of ‘application’, ‘request’, and so on. Inventing your own DI scopes and wiring them up on the fly seems like a promising avenue, although it probably comes with some risk in terms of potentially making the wiring logic overly complex.
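A minimal sketch of what a use-case-scoped object graph could look like. All names here (`Pod`, `PricePod`, `PricePageContext`) are hypothetical and are not Shopzilla's actual code; the point is only that the context is created per request and hands each pod exactly the data it needs, instead of a kitchen sink map.

```java
import java.util.List;

// Hypothetical names throughout; this is an illustration, not Shopzilla's code.
interface Pod {
    String render();
}

// A pod that receives only the data it needs, through its constructor,
// rather than a map containing everything known about the request.
final class PricePod implements Pod {
    private final String productName;
    private final List<Integer> pricesInCents;

    PricePod(String productName, List<Integer> pricesInCents) {
        this.productName = productName;
        this.pricesInCents = pricesInCents;
    }

    @Override
    public String render() {
        int lowest = pricesInCents.stream().min(Integer::compare).orElse(0);
        return productName + " from " + lowest + " cents";
    }
}

// The use-case scope: created once per request, it wires up the object graph
// for this use case only and is discarded afterwards.
final class PricePageContext {
    private final String productName;
    private final List<Integer> priceServiceResult;

    PricePageContext(String productName, List<Integer> priceServiceResult) {
        this.productName = productName;
        this.priceServiceResult = priceServiceResult;
    }

    Pod pricePod() {
        return new PricePod(productName, priceServiceResult);
    }
}
```

Because `PricePod` is no longer singleton-scoped, it can be tested by constructing it directly with a name and a list of prices, without building any request-wide data structure.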

In some of the material produced by DCI advocates, there is a strong stress on the separation between ‘object-oriented’ and ‘class-oriented’ programming, with class-oriented being the bad one. This is a distinction I don’t get. Or, the distinction I see feels too trivial to be made into a big deal. Of course objects are instances of a class, and of course the system’s runtime state in terms of objects and their relationships is the thing that makes it work or not. But who ever said anything different? There must be something that I’m missing here, so I will have to keep digging at it to figure out what it is.

DCI + DI

I think the spell-checker example that James Coplien uses in his presentations can be used in a great way to show how DCI and DI together make a harmonious combo. If you need to do spell checking, you probably need to be able to handle different languages. So you could have a spell checker for English, one for French and one for Swedish. Exactly which one you would choose for a given file, text field, document or region of text is probably something that you can decide in many ways: based on the language of the document or text region, based on some application setting, or based on the output of a language detector. A very naive implementation would make the spell checker a singleton and let it figure out which language to use. This ties the spell checker tightly to application-specific things such as configuration settings, document metadata, etc., and obviously doesn’t aid code reuse. A less naive solution might take a language code as a parameter to the singleton spell checker, and let the application figure out what language to pass. This becomes problematic when you realise that you may want to spell check English (US) differently from English (UK) and therefore language isn’t enough, you need country as well – in the real world, there are often many additional parameters like this to an API. By making the spell checker object capable of selecting the particular logic for doing spell checking, it has been tied to application-specific concerns. Its API will frequently need to change when application-level use cases change, so it becomes hard to reuse the spell-checker code.

A nicer approach is to have the three spell checkers as separate objects, and let a use-case-specific context select which one to use (I’m not sure if the context should figure out the language or if that should be a parameter to the context – probably, contexts should be simple, but I can definitely think of cases where you might want some logic there). All the spell checker needs is some text. It doesn’t care about whether it came from a file or a selected region of text, and it doesn’t care what language it is in. All it does is check the spelling according to the rules it knows and return the result. For this to work, there need to be a few application-specific things: the trigger for a spell checking use case, the context in which the use case executes (the casting or wiring up of the actors to play different roles), and the code for the use case itself. In this case, the ‘use case’ code is probably something like:

public class SpellCheckingUseCase {
    private final Text text;
    private final SpellChecker spellChecker;
    private final Reporter reporter;

    // constructor injection ...

    public void execute() {
        SpellCheckingResult result = spellChecker.check(text);
        reporter.display(result);
    }
}

The reporter role could be played by something that knows how to render squiggly red lines in a document or print out a list of misspelled words to a file or whatever.
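The context that does the casting could be sketched roughly as follows. This is an assumption-laden illustration, not code from the DCI material: the `SpellCheckingContext` and `DictionarySpellChecker` names are hypothetical, the text is a plain `String` and the result a list of misspelled words rather than `Text` and `SpellCheckingResult`, and the context runs the two lines of use case logic itself instead of constructing a `SpellCheckingUseCase`.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Locale;
import java.util.Map;
import java.util.Set;

// All names are hypothetical; types are simplified to keep the sketch short.
interface SpellChecker {
    List<String> check(String text);   // returns the misspelled words
}

interface Reporter {
    void display(List<String> misspelled);
}

// A deliberately tiny checker: a word is misspelled if it is not in the dictionary.
// It knows nothing about where the text came from or how it was selected.
final class DictionarySpellChecker implements SpellChecker {
    private final Set<String> dictionary;

    DictionarySpellChecker(String... words) {
        this.dictionary = new HashSet<>(Arrays.asList(words));
    }

    @Override
    public List<String> check(String text) {
        List<String> misspelled = new ArrayList<>();
        // Split on anything that is not a letter.
        for (String word : text.toLowerCase(Locale.ROOT).split("[^\\p{L}]+")) {
            if (!word.isEmpty() && !dictionary.contains(word)) {
                misspelled.add(word);
            }
        }
        return misspelled;
    }
}

// The context: casts one of the registered checkers in the SpellChecker role
// for a single execution of the use case.
final class SpellCheckingContext {
    private final Map<Locale, SpellChecker> checkers = new HashMap<>();

    void register(Locale locale, SpellChecker checker) {
        checkers.put(locale, checker);
    }

    void execute(String text, Locale locale, Reporter reporter) {
        SpellChecker checker = checkers.get(locale);
        if (checker == null) {
            throw new IllegalArgumentException("No spell checker for " + locale);
        }
        reporter.display(checker.check(text));
    }
}
```

Here the locale is a parameter to the context, which keeps the context simple. Registering separate checkers for `Locale.US` and `Locale.UK` covers the US/UK case without the checkers themselves knowing anything about languages or countries.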

In terms of layering and reuse, this is nice. The contexts are use-case specific and therefore at the very top layer. They can reuse the same use case execution code, but the use case code is probably too specific to be shared between applications. The spell checkers, on the other hand, are completely generic and can easily be reused in other applications, as is the probably very fundamental concept of a text. The Text class and the spell checkers are unlikely to change frequently because language doesn’t change that much. The use case code can be expected to change, and the contexts that trigger the use case will probably change very frequently – need to check spelling when a document is first opened? Triggered by a button click? A whole document? A region, or just the last word that was entered? Use different spell checkers for different parts of a document?

I think this is a great example of how DCI helps you get the right code structure. So I like DCI on the whole – but I still don’t get the whole classes vs objects discussion and why it is such a great thing to do role injection. Let the algorithm-encoding objects be objects in their own right instead.

Composition vs Inheritance

Posted in Java on August 20, 2010

One of the questions I often ask when interviewing candidate programmers is “what is the difference between inheritance and composition”. Some people don’t know, and they’re obviously out right then and there. Most people explain that “inheritance represents an is-a relationship and composition a has-a”, which is correct but insufficient. Very few people have a good answer to the question that I’m really asking: what impact does the choice of one or the other have on your software design? The last few years it seems there has been a trend towards feeling that inheritance is over-used and composition to a corresponding degree under-used. I agree, and will try to show some concrete reasons why that is the case. (As a side note, the main trigger for this blog post is that about a year ago, I introduced a class hierarchy as a part of a refactoring story, and it was immediately obvious that it was an awkward solution. However, it (and the other changes) represented a big improvement over what went before, and there wasn’t time to fix it back then. It’s bugged me ever since, and thanks to our gradual refactoring, we’re getting closer to the time when we can put something better in place, so I started thinking about it again.)

One guy I interviewed the other day had a pretty good attempt at describing when one should choose one or the other: it depends on which is closest to the real world situation that you’re trying to model. That has the ring of truth to it, but I’m not sure it is enough. As an example, take the classically hierarchical model of the animal kingdom: Humans and Chimpanzees are both Primates, and, skipping a bunch of steps, they are Animals just like Kangaroos and Tigers. This is clearly a set of is-a relationships, so we could model it like so:

public class Animal {}

public class Primate extends Animal {}

public class Human extends Primate {}

public class Chimpanzee extends Primate {}

public class Kangaroo extends Animal {}

public class Tiger extends Animal {}

Now, let’s add some information about their various body types and how they walk. Both Humans and Chimpanzees have two arms and two legs, and Tigers have four legs. So we could add two legs and two arms at the Primate level, and four legs at the Tiger level. But then it turns out that Kangaroos also have two legs and two arms, so this solution doesn’t work without duplication. Perhaps a more general concept involving limbs is needed at the animal level? There would still be duplication of the ‘legs + arms’ classification in both Kangaroo and Primate, not to mention what would happen if we introduce a RattleSnake into our Eden (or an EarthWorm if you prefer an animal that has never had limbs at any stage of its evolutionary history).

A similar problem is shown by introducing a walk() method. Humans do a bipedal walk, Chimpanzees and Tigers walk on all fours, and Kangaroos hop using their rear feet and tail. Where do we locate the logic for movement? All the animals in our sample hierarchy can walk on all fours (although humans tend to do so only when young or inebriated), so having a quadrupedWalk() method at the Animal level that is called by the walk() methods of Tiger and Chimpanzee makes sense. At least until we add a Chicken or Halibut, because they will now have access to a type of locomotion they shouldn’t.

The root problem here is of course that the model is bad even though it seems like a natural one. If you think about it, body types and locomotion are not defined by the various creatures’ positions in the taxonomy – there are both mammals and fish that swim, and fish, lizards and snakes can all be legless. I think it is natural for us to think in terms of templates and inherited properties from a more general class (Douglas Hofstadter said something very similar, I think in Gödel, Escher, Bach). If that kind of thinking is a human tendency, that could explain why it seems so common to initially think that a hierarchical model is the best one. It is often a mistake to assume that the presence of an is-a relationship in the real world means that inheritance is right for your object model.

But even if, unlike the example above, a hierarchical model is a suitable one to describe a certain real-world scenario, there are issues. A big one, in my opinion, is that the classes that make up a hierarchical model are very tightly coupled. You cannot instantiate a class without also instantiating all of its superclasses. This makes them harder to test – you can never ‘mock’ a superclass, so each test of a leaf in the hierarchy requires you to do all the setup for all the classes above. In the example above, you might have to set up the limbs of your RattleSnake and the data needed for the quadrupedWalk() method of the Halibut before you could test them.

With inheritance, the smallest testable unit is larger than it is when composition is used, and you increase code duplication, at least in your tests. In this way, inheritance is similar to use of static methods – it removes the option to inject different behaviour when testing. The more code you try to reuse from the superclass/es, the more redundant test code you will typically get, and all that redundancy will slow you down when you modify the code in the future. Or, worse, it will make you not test your code at all because it’s such a pain.

There is an alternative model, where each of the beings above have-a BodyType and possibly also have-a Locomotion that knows how to move that body. Probably, each of the animals could be differently configured instances of the same Animal class.

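public interface BodyType {}

public class TwoArmsTwoLegs implements BodyType {}

public class FourLegs implements BodyType {}

public class NoLimbs implements BodyType {}

public interface Locomotion<B extends BodyType> {
    void walk(B body);
}

public class BipedWalk implements Locomotion<TwoArmsTwoLegs> {
    public void walk(TwoArmsTwoLegs body) {}
}

public class Slither implements Locomotion<NoLimbs> {
    public void walk(NoLimbs body) {}
}

// Generic, so that a body can only be paired with a locomotion that can move it
public class Animal<B extends BodyType> {
    private final B body;
    private final Locomotion<B> locomotion;

    public Animal(B body, Locomotion<B> locomotion) {
        this.body = body;
        this.locomotion = locomotion;
    }
}

Animal<TwoArmsTwoLegs> human = new Animal<>(new TwoArmsTwoLegs(), new BipedWalk());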

This way, you can very easily test the bodies, the locomotions, and the animals in isolation from each other. You may or may not want to do some automated functional testing of the way that you wired up your Human to ensure that it behaves like you want it to. I think composition is almost universally preferable to inheritance for code reuse – I’ve tried to think of cases where the only natural model is a hierarchical one and you can’t use composition instead, but it seems to be beyond me.
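To make the testing claim concrete, here is a small sketch of testing an animal in isolation with a hand-written test double. It assumes a generic `Animal` with a `move()` method that delegates to its locomotion; both `move()` and `RecordingLocomotion` are hypothetical additions for illustration and are not part of the model above.

```java
// Simplified, hypothetical versions of the types in the composition model.
interface BodyType {}

interface Locomotion<B extends BodyType> {
    void walk(B body);
}

final class TwoArmsTwoLegs implements BodyType {}

final class Animal<B extends BodyType> {
    private final B body;
    private final Locomotion<B> locomotion;

    Animal(B body, Locomotion<B> locomotion) {
        this.body = body;
        this.locomotion = locomotion;
    }

    // Assumed method: delegates movement to the injected collaborator.
    void move() {
        locomotion.walk(body);
    }
}

// A test double standing in for a real locomotion: it only records the body
// it was asked to move. There is no equivalent way to replace a superclass.
final class RecordingLocomotion<B extends BodyType> implements Locomotion<B> {
    B walked;

    @Override
    public void walk(B body) {
        walked = body;
    }
}
```

A test of `Animal` then needs no real walking logic at all: construct it with a `RecordingLocomotion`, call `move()`, and check that `walked` is the body that was passed in. The real `BipedWalk` can be tested separately, with nothing but a `TwoArmsTwoLegs` instance.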

In addition to the arguments here, there’s the well-known fact that inheritance breaks encapsulation by exposing subclasses to implementation details in the superclass. This makes the use of subclassing as a means to integrate with a library a fragile solution, or at least one that limits the future evolution of the library.

So, there it is – that summarises what I think are the differences between composition and inheritance, and why one should tend to prefer composition over inheritance. So, if you’re reading this because you googled me before an interview, this is one answer you should get right!

Finding Duplicate Class Definitions Using Maven

Posted in Software Development on August 5, 2010

If you have a largish set of internal libraries with a complex dependency graph, chances are you’ll be including different versions of the same class via different paths. The exact version of the class that gets loaded seems to depend on the combination of JVM, class loader and operating system that happens to be used at the time. This can cause builds to fail on some systems but not others and is quite annoying. When this has been happening to me, it’s usually been for one of two reasons:

- We’ve been restructuring our internal artifacts, and something was moved from artifact A to B, only the project in question is still on a version of artifact A that is “pre-removal”. This often leads to binary incompatibilities if the class has evolved since being moved to artifact B.

- Two artifacts in the dependency graph have dependencies on artifacts that, while actually different as artifacts, contain class files for the same class. This can typically happen with libraries that provide jar distributions that include all dependencies, or where there are distributions that are partial or full.

On a couple of previous occasions, when trying to figure out how duplicate class definitions made it into projects I’ve been working on, I’ve gone through a laborious manual process to list class names defined in jars, and see which ones are repeated in more than one. I thought that a better option might be to see if that functionality could be added into the Maven dependency plugin.

My original idea was to add a new goal, something like ‘dependency:duplicate-classes’, but when looking a little more closely at the source code of the dependency plugin, I found that the dependency:analyze goal had all the information needed to figure out which classes are defined more than once. So I decided to make a version of the maven-dependency-plugin where it is possible to detect duplicate class definitions using ‘mvn dependency:analyze’.
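The underlying check is simple enough to sketch outside Maven. The following is a standalone illustration using only the JDK's `java.util.jar` API; it is not the code of the forked plugin, and the `DuplicateClassFinder` name is made up for this example. It lists the `.class` entries of each jar and keeps the class names that appear in more than one.

```java
import java.io.IOException;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.Collection;
import java.util.Enumeration;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;
import java.util.jar.JarEntry;
import java.util.jar.JarFile;

// A standalone sketch; not the implementation used by the forked plugin.
final class DuplicateClassFinder {

    // Returns each class name that is defined in two or more of the given
    // jars, mapped to the jars that define it.
    static Map<String, List<Path>> findDuplicates(Collection<Path> jars) throws IOException {
        Map<String, List<Path>> definedIn = new TreeMap<>();
        for (Path jar : jars) {
            try (JarFile jarFile = new JarFile(jar.toFile())) {
                Enumeration<JarEntry> entries = jarFile.entries();
                while (entries.hasMoreElements()) {
                    String name = entries.nextElement().getName();
                    if (name.endsWith(".class")) {
                        // com/example/Foo.class -> com.example.Foo
                        String className = name
                                .substring(0, name.length() - ".class".length())
                                .replace('/', '.');
                        definedIn.computeIfAbsent(className, k -> new ArrayList<>()).add(jar);
                    }
                }
            }
        }
        // Drop everything that is defined in only one jar.
        definedIn.values().removeIf(paths -> paths.size() < 2);
        return definedIn;
    }

    public static void main(String[] args) throws IOException {
        List<Path> jars = new ArrayList<>();
        for (String arg : args) {
            jars.add(Paths.get(arg));
        }
        findDuplicates(jars).forEach(
                (className, paths) -> System.out.println(className + " defined in: " + paths));
    }
}
```

Run by hand, this needs the list of jar paths on the command line, which is the laborious part. The advantage of doing the check inside the dependency plugin is that Maven already knows which artifacts are in the resolved dependency graph.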

The easiest way to run the updated plugin is like this:

mvn dependency:analyze -DcheckDuplicateClasses

The output if duplicate classes are found is something like:

[WARNING] Duplicate class definitions found:
[WARNING] com.shopzilla.common.data.ObjectFactory defined in:
[WARNING] com.shopzilla.site.url.c14n:model:jar:1.4:compile
[WARNING] com.shopzilla.common.data:data-model-schema:jar:1.23:compile
[WARNING] com.shopzilla.site.category.CategoryProvider defined in:
[WARNING] com.shopzilla.site2.sasClient:sas-client-core:jar:5.47:compile
[WARNING] com.shopzilla.site2.service:common-web:jar:5.50:compile

If you would like to try the updated plugin on your project, here’s how to do it:

- Get the forked code for the dependency analyzer goal from http://github.com/pettermahlen/maven-dependency-analyzer-fork and install it in your local Maven repo by running ‘mvn install’. (It appears that for some people, the unit tests fail during this process – I’ve not been able to reproduce this, and it’s not the tests that I wrote, so in this case my recommendation would be to simply use -DskipTests=true to ignore them).

- Get the forked code for the dependency plugin from http://github.com/pettermahlen/maven-dependency-plugin-fork and install it in your local Maven repo by running ‘mvn install’.

- Update your pom.xml file to use the forked version of the dependency plugin (it’s probably also possible to use the plugin registry, but I’ve not tested that):

<build>
  <pluginManagement>
    <plugins>
      <plugin>
        <artifactId>maven-dependency-plugin</artifactId>
        <version>2.2.PM-SNAPSHOT</version>
      </plugin>
    </plugins>
  </pluginManagement>
</build>

I’ve filed a JIRA ticket to get this feature included into the dependency plugin – if you think it would be useful, it might be a good idea to vote for it. Also, if you have any feedback about the feature, feel free to comment here!

Code Sharing Wrap-up

Posted in Software Development on July 25, 2010

As I suspected, when I planned to write a series of posts about code sharing, I’ve realised that I won’t write them all. The main reason is that I started out with the juiciest bits, where I felt I had something interesting to say, and the rest of the subjects feel too dry and I don’t think I can write interesting posts about them individually. So I’ll lump them together and describe briefly what I mean by them in this wrap-up post instead.

The bullet points that I don’t think are ‘big’ enough to warrant individual posts are:

- Use JUnit.

- Use Hudson.

- Manage Dependencies.

- Communicate.

Let’s tackle them one by one. The first one, ‘Use JUnit’, is not so much intended to say that JUnit is the only unit testing framework out there (TestNG is as good, in my opinion). It is rather a statement about the importance of good automated tests when sharing code. The obvious motivation is that almost every conflicting change between two teams is a regression error and therefore possible to catch with automated tests. If each team ensures that the use cases they want from a shared library are tested automatically (note that I don’t call the tests unit tests; they are more functional than unit tests) with each build, they can guard their desired functionality from breaking due to changes made by another team. A functional test that is broken intentionally due to a change desired by one team should trigger communication between teams to ensure that it is changed in a way that works for all clients of the library.

I’ve never tried formalising the use of different sets of functional tests owned by different clients of a library as opposed to just having a single comprehensive set of unit tests. But it feels like a potentially quite attractive proposition, so it might be interesting to try. It might require some work in terms of getting it into the build infrastructure in a good way. I’d love to be able to see how that works at some point, but simply having a single comprehensive set of unit tests works really well in terms of guarding functionality, too.

‘Use Hudson’ says that continuous integration (CI) is vital when sharing code. It feels like everybody knows that these days, so I don’t think I need to make the case for CI in general. In the context of sharing libraries, the obvious benefit of CI is that you will detect failures sooner than you would if you just relied on individual developers’ builds. This is especially true of linkage-type errors. You’ll catch most errors that would break a unit test in the library you’re working on by just running the build locally, but CI servers tend to be better at checking that the library works with the latest snapshots of related libraries and vice versa. Of the CI servers I’ve used (which include Continuum and Cruise Control), Hudson has been by a wide margin the best. Hudson’s strength relative to the others is primarily in the ease of managing build lines – the way we use it, anybody can and does create and modify builds for some project almost weekly. I haven’t used the others in a couple of years, so it may have changed, but back then, what takes 30 seconds in Hudson took an hour or more with them, depending on how well you remembered their tricks.

I think that I touched on most of the arguments I wanted to make about ‘Manage Dependencies’ in the post I wrote titled Divide and Conquer. Essentially, the graph of dependencies between shared libraries that you introduce is something that is going to be very hard and expensive to change, so it is well worth spending some time thinking hard about what it should be like before you finalise it. The Divide and Conquer post contains some more detail on what makes it hard to evolve that graph as well as some tips about how to get it right.

The final point is ‘Communicate’. I sometimes think that communication is the hardest thing that two people can try to do, and of course it gets quadratically harder as you add more people. It is interesting to note how much of business hierarchies and processes are aimed at preventing or fixing communication problems. In the particular case of code sharing, the most important communication problems to solve are:

- Proactive notifications – if one team is going to make a change to a shared library, many problems can easily be avoided if other teams are notified before those changes are made so that they get the opportunity to give feedback about how that change might affect them. At Shopzilla, we’re using a mailing list where each team is obliged to send three kinds of messages:

- After each sprint planning session, a message saying either “We’re not planning to make any changes to shared code”, or “We’re anticipating making the following changes to shared code: a), b) and c)”. The point of always sending an email is that it is very easy to forget about this type of communication, so always having to do it should mean forgetting it less often.

- If a need to make changes is detected later than sprint planning (which happens often), a specific notification of that.

- If changes have been made by another team that led to problems, a description of the changes and problems. This is so that we can continuously improve, not in order to point fingers at people that misbehave.

- Understanding requirements and determining correct solutions – it is often not obvious from just looking at some code why it has been implemented the way it is. In that scenario, it is important to have an easy way of getting hold of the person/people that have written the code to understand what requirements they were trying to meet when writing it so that one can avoid breaking things when making modifications. This is often made harder by client evolution: shared code may not be modified to remove some feature as clients stop using it, so dead code is relatively common. Again, I think that a mailing list (or one per some sub-category of shared code) is a useful tool.

- Last but probably most important: a collaborative mindset – this is arguably not ‘just’ a communication problem, but it can definitely be a problem for communication. It is possible to get into a tragedy of the commons-type situation, where the shared code is mismanaged because everybody focuses primarily on their own products’ needs rather than the shared value. This can manifest itself in many ways, from poor implementations of changes in the shared code, to lack of responsiveness when there is a need for discussions and decisions about how to evolve it. To get the benefits of sharing, it is crucial that the teams sharing code want to and are allowed to spend enough time on shared concerns.

So, that concludes the code sharing series. In summary, it’s a great thing to do if done right, but there are a lot of things that can go wrong in ways that you might not expect beforehand – the benefits of sharing code are typically more obvious than the costs.

Making No Mistakes is a Mistake

Posted in Software Development on June 9, 2010

Here’s one of my favourite diagrams:

What it shows is an extremely common situation – an axis, where one type of cost is very large at one end of the scale (red line), and a conflicting type of cost is very large at the other end of the scale (green line). The total cost (purple line) has an optimum somewhere between the extremes. I mentioned one such situation when talking about teams working in isolation or interfering with each other. To me, another example is doing design up-front. If you don’t do any design before starting to write a significant piece of code, you’re likely to go very wrong – if nothing else, in terms of performance or scalability, which are hard to engineer in at a late stage. But on the other hand, Big Design Up Front typically means that you waste a lot of time solving problems that, once you get down to the details, turn out never to have needed solutions. It’s also easier to come up with a good design in the context of a partially coded, concrete system than for something that is all blueprints and abstract. So at one end of the scale, you do no design before you write code, and you run a risk of incurring huge costs due to mistakes that could have been avoided by a little planning. And at the other end of the scale, you do a lot of design before coding, and you probably spend way too much time agonizing over perceived problems that you in fact never run into once you start writing the code. And somewhere in the middle is the sweet spot where you want to be – doing lagom design, to use a Swedish term.
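To make the shape of that trade-off concrete, here is a minimal Java sketch. The two cost functions and their constants are made up purely for illustration (they are assumptions, not measurements of anything): one cost falls as up-front effort grows, the other rises, and the sum has its lowest point somewhere in between.

```java
import java.util.function.DoubleUnaryOperator;

/** Illustrative only: two invented cost curves and the effort level where their sum is smallest. */
final class SweetSpot {

    /** Scans effort levels 1..100 and returns the first one with the lowest total cost. */
    static int cheapestEffort(DoubleUnaryOperator mistakeCost, DoubleUnaryOperator preventionCost) {
        int best = 1;
        double bestTotal = Double.MAX_VALUE;
        for (int effort = 1; effort <= 100; effort++) {
            double total = mistakeCost.applyAsDouble(effort) + preventionCost.applyAsDouble(effort);
            if (total < bestTotal) {
                bestTotal = total;
                best = effort;
            }
        }
        return best;
    }

    public static void main(String[] args) {
        // Assumed shapes: mistakes get cheaper with more up-front effort, prevention gets more expensive.
        DoubleUnaryOperator mistakeCost = effort -> 400.0 / effort;
        DoubleUnaryOperator preventionCost = effort -> 4.0 * effort;
        System.out.println(cheapestEffort(mistakeCost, preventionCost)); // prints 10
    }
}
```

With these particular curves the optimum lands at an effort of 10, where both costs are 40. Neither cost is zero there, and that is the point: at the sweet spot you still pay for some mistakes.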

The diagram shows a useful way of thinking about situations for two reasons: first, these situations are very common, so you can use it often, and second, people (myself very much included) often tend to see only one of the pressures. “If we don’t write this design document, it will be harder for new hires to understand how the system works, so we must write it!” True, but how often will we have new hires that need to understand the system, how much easier will a design document make this understanding, and how much time will it take to write the document and keep it up to date? A lot of the time, the focus on one of these pressures comes from a strong feeling of what is “the proper way to do things”, which can lead to flame wars on the web or heated debates within a company or team. An awareness of the fact that costs are not absolute – it is not the end of the world if it takes some time for a new hire to understand the system design, and a document isn’t going to make that cost zero anyway – and of the fact that there is a need to weigh two types of costs against each other can make it easier to make good decisions and have productive debates.

For me, a particularly interesting example of conflicting pressures is where work processes are concerned. I think it is fair to say that they are introduced to prevent mistakes of different kinds. With inadequate processes, you run a risk of losing a lot of time or money: lack of reporting leads to lack of management insight into a project, which results in worse decisions, lack of communication means that two people in different parts of the same room write two almost identical classes to solve the same problem, lack of quality assurance leads to poorly performing products and angry customers, etc. On the other hand, every process step you introduce comes with some sort of cost. It takes time to write a project report and time to read it. Somebody must probably be a central point of communication to ensure that individual programmers are aware of the types of problems that other programmers are working on, and verifying that something that “has always worked and we didn’t change” still works can be a big effort. So we have conflicting pressures: mistakes come with a cost, and process introduced to prevent those mistakes comes with some overhead.

As a side note, not all process steps are equal in terms of power-to-weight ratio. A somewhat strained example could be of a manager who is worried about his staff taking too long lunch breaks. He might require them to leave him written notes about when they start and finish their lunch. The process step will give him some sort of clearer picture of how much time they spend, but it comes at a cost in time and resentment caused by writing documents perceived as useless and an impression of a lack of trust. An example of a good power-to-weight ratio could be the daily standup in Scrum, which is a great way of ensuring that team members are up to date with what others are doing without wasting large amounts of time on it.

Getting back to the main point: when adopting or adapting work processes, we’ll want to find a sweet spot where we have enough process to ensure sufficient control over our mistakes, but where we don’t waste a lot of time doing things that don’t directly create value. Kurt Högnelid, who was my boss in 1998-1999, and who taught me most of what I know about managing people, pointed out something to me: at the sweet spot, you will make mistakes! This is counter-intuitive in some ways. During a retrospective, we tend to want to find out what went wrong and take action to prevent the same thing from happening again. But this diagram tells us that is sometimes wrong – if we have a situation where we never make mistakes, we’re in fact likely to be wasteful in that we’re probably overspending on prevention.

Different mistakes are different. If the product at hand is a medical system, it is likely that we want to ensure that we never make mistakes that lead it to be broken in ways that might harm people. The same thing is true of most systems where bugs would lead to large financial loss. But I definitely think that there is a tendency to overspend on preventing simple work-related mistakes through for instance too much planning or documentation, where rare mistakes are likely to cost less than the continuous over-investment in process. It takes a bit of daring to accept that things will go wrong and to see that as an indication of having found the right balance.

Code Sharing: Divide and Conquer

Posted in Code Management on June 3, 2010

When I was at Jadestone, one of the objectives that the CEO explicitly gave me was to come up with a great way to share code between our products. I spent a fair amount of time thinking about and working on how to do that, but I left the company before most of the ideas came to be fully used throughout the company. Just over a year later, I joined Shopzilla, and found that to a very large extent the same ideas that I had introduced as concepts at Jadestone were being used or recommended in practice there. So a lot of the articles I’ve written about code sharing describe ideas and practices from Jadestone and Shopzilla, although focusing specifically on things I personally find important. This one will cover some areas where I think we still have a bit of work to do to really nail things at Shopzilla, although we probably have the beginnings of many of the practices down.

You can’t include all your code into every top-level product you build. This means you’ll need to break your ecosystem down into some sort of sub-structures and pick and choose the parts you want to use in each product. I think it makes sense to have three kinds of overlapping structures: libraries, layers and services. Libraries are the atoms of sharing and since you use Maven, one library corresponds to a single artifact. A library will build on functionality provided by other libraries, and to manage this, they should be organised into layers, where libraries in one layer are more general than libraries in layers above. A lot of the time, you’ll want to have a coarser structure than libraries, for both code management, architectural and operational reasons, and that’s when you create a service that provides some sort of function that will be used in the top-level products.

I’ve found that a lot of the time, code sharing isn’t planned, but emerges. You start with one product, and then there is an opportunity to create a new product that is similar to the first, so you build it on parts of the first. This means that you normally haven’t got a carefully planned set of libraries with well-defined and thought out dependencies between each other and can give rise to some problems:

- Over-large and incoherent libraries. Typical indications of this are a high rate of change and associated high frequency of conflicting changes, forced inclusion of code that you don’t really need into certain builds because stuff you need is packaged with unrelated and irrelevant other code, and difficulties figuring out what dependencies you should have and where to add code for some new feature.

- Shared libraries that contain code that isn’t really a good candidate for sharing. Trying to include that code in different products typically leads to snarled code in the library, with lots of product-specific conditions or APIs that haven’t made a decision on what their clients actually should use them for. Sometimes, the library will contain some code that is perfect for sharing, and some that definitely isn’t.

- A poor dependency structure: a library that is perfect for sharing might have a dependency on one that you would prefer to leave as product-specific, or there might be circular dependencies between libraries.

Once a library structure is in place and used by multiple teams, it is hard to change because a) it involves making many backwards-incompatible changes, b) it is work that is boring and difficult for developers, c) it gives no short-term value for business owners, and d) it requires cross-team schedule coordination. However, it’s one of those things where the longer you leave it, the higher the accumulated costs in terms of confusion and lack of synergies, so if you’re reasonably sure that the code in question will live for a long time, it is likely to eventually be a worthwhile investment to make. It is possible to do at least some parts of a library restructuring incrementally, although there are in my experience some cases where you’ll bring a few people to a total standstill for a month or so while cleaning up some particular mess. That sort of situation is of course particularly hard to get resolved. It requires discipline, coordination, and above all, a clear understanding of the reasons for making the change – those reasons cannot be as simple as ‘the code needs to be clean’, there should be a cost-benefit analysis of some kind if you want to be really professional about it. If you invest a man-month in cleaning up your library structure, how long will it take before you’ve recouped that man-month?

Given that it is possible to go wrong with your shared library structure, what are the characteristics of a library structure that has gone right? Here’s a couple more bullet points and a diagram:

- Libraries are coherent and of the right size. There are some opposing forces that affect what is “the right library size”: smaller libraries are awkward to work with from a source management, information management and documentation perspective since the smaller the libraries are, the more of them you need. This means you’ll have more or more complicated IDE projects and build files, and you’ll have a larger set of things to search in order to find out where a particular feature is implemented. On the other hand, larger libraries suffer from a lack of purpose and coherence, which makes conflicts between teams sharing them more likely, increases their rate of change, and makes it harder to describe what they exist to do. All these things make them less suitable for sharing. I think you want to have libraries that are as fine-grained as you can make them, without making it too hard to get an overview over which libraries are available, what they are used for and where to find the code you want to make a change to.

- There’s a clear definition of what type of information and logic goes where in the layered structure. At the bottom, you’ll find super-generic things like logging and monitoring code. Slightly higher up, you’ll typically have things that are very central to the business: normally anything that relates to money or customers, where you’ll want to ensure that all products work the same way. Further upwards, you can find things that are shared within a given product category, and if you have higher level libraries, they are quite likely to be product-specific, so maybe not candidates for sharing at all.

- In addition to the horizontal structure defined by the layers, there is a coarse-grained vertical structure provided by services. Services group together related functions and are usually primarily introduced for operational reasons – for instance, the need to scale a certain set of features independently of some other set. But they also add to architectural clarity in that they provide a simpler view on their function set, allowing products to share implementations of certain features without being linked using the same code. Services also simplify code sharing in the way that they provide isolation: you can have separate teams develop services and clients in parallel as long as you have a sufficiently well-defined service API.
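The layering rule lends itself to a mechanical check. The following is a minimal Java sketch under a few assumptions of my own: the library names and layer numbers are hypothetical, and the rule is taken to be the strict version where a library may only depend on libraries in a lower layer (which also rules out circular dependencies). It uses `Map.of` and `List.of`, so it needs Java 9 or later.

```java
import java.util.List;
import java.util.Map;

/** Hypothetical sketch: libraries are assigned to numbered layers and may only depend downwards. */
final class LayerCheck {

    /**
     * Returns true if every dependency points to a library in a strictly lower layer.
     * Every library mentioned in 'dependencies' must have an entry in 'layerOf'.
     */
    static boolean dependenciesPointDownwards(Map<String, Integer> layerOf,
                                              Map<String, List<String>> dependencies) {
        for (Map.Entry<String, List<String>> entry : dependencies.entrySet()) {
            int fromLayer = layerOf.get(entry.getKey());
            for (String dependency : entry.getValue()) {
                if (layerOf.get(dependency) >= fromLayer) {
                    return false; // same-layer or upward dependency
                }
            }
        }
        return true;
    }

    public static void main(String[] args) {
        // Made-up names: generic code at the bottom, business-central code above it,
        // product-category code at the top.
        Map<String, Integer> layerOf = Map.of("logging", 0, "customers", 1, "category-a", 2);
        Map<String, List<String>> dependencies = Map.of(
                "customers", List.of("logging"),
                "category-a", List.of("customers", "logging"));
        System.out.println(dependenciesPointDownwards(layerOf, dependencies)); // prints true
    }
}
```

A check like this could run as part of the build, so that a dependency from a general library onto a product-specific one is caught when it is introduced rather than after several teams have started relying on it.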

Structuring your code in a way that is conducive to sharing is a good thing, but it is also hard. I particularly struggle with the fact that it is very hard to be agile about it: you can’t easily “inspect and adapt”, because of the difficulty of changing a library structure once it is in place. The best opportunity to put a good structure in place is when you start sharing some code between two products, but at that time it is very hard to foresee future developments (how is product number three going to be similar to or different from products 1 and 2?). Defining a coarse layered structure based on expected ‘genericness’ and making libraries small enough to be coherent is probably the best way to get the structure approximately right.

Gradual Refactoring

Posted in Code Management on May 19, 2010

We’re right now in the middle of something that feels a little like an unplanned experiment in code management. Unplanned in the sense that I didn’t expect us to work the way we do, not so much in the sense that I think it is particularly risky. It started not quite a year ago with my colleague Mateusz suggesting that we should dedicate a fixed percentage of the story points in each sprint to what he called maintenance or technical stories. That soon crystallised into a backlog owned by me, where we schedule stories that are aimed at somehow improving our productivity as opposed to our product. So Paul, the ‘normal’ product owner, is in charge of the main backlog that deals with improving the products, and I am the owner of the maintenance backlog, where we improve the efficiency of how we work by improving processes, tools and removing technical debt. We’re aiming at spending about 15% of our time on technical backlog stories, and we more or less do.

Typical examples of stories that have gone onto our technical backlog are:

- A tool that allows QA to specify that outgoing service calls matching certain regular expressions should return mock data specified in a file rather than actually calling the service. This makes us a lot more productive with regard to verifying site behaviour in certain hard-to-recreate and data-dependent cases.

- Improvements to our performance monitoring systems and tools that make it easier for us to figure out where we have performance problems when we do.

- Auditing and optimising the QA server allocation in order to speed up especially our automated test scripts.

- Various refactoring stories that clean up code where functional evolution has led to the original design no longer being suitable.

That has worked out pretty much as expected: we’ve gained benefits from the productivity improvements and we continue to spend 5-6 times more effort on money-making product improvements than on engineering driven platform-building.

The unexpected thing that has happened is that we’re heading towards a situation where we have different design generations that solve similar problems. As an example, the original pattern we used to create Spring MVC controllers has broken down, so we’ve come up with a new one that is better though not yet perfect. In order to have stories small enough to complete within one iteration, we’ve had to apply this pattern on a controller-by-controller basis – each refactoring has taken about 2 weeks of calendar time so far, except the first one which took about 4, so the effort isn’t trivial. There are nearly 40 controller implementations in our site code and about 95% of the incoming traffic is handled by 4 of those. We’re now at a stage where we’ve refactored three of those four controllers. Given that the other 35 or so controllers a) don’t serve a lot of traffic, so don’t have a lot of business value and b) don’t get changed a lot because the functions they provide aren’t ones that we need to innovate in, I don’t feel like refactoring all of them. In fact, the next refactoring story in the backlog is aimed at a different area in the site, where a repeated pattern has broken down in a similar way.

My initial gut reaction was that we should apply any new pattern for a commonly occurring situation across the board, then tackle the next similar situation. That keeps the code clean and makes it easy to find your way around. But the point of refactoring is that it must be an investment that you can recoup, and if we haven’t spent more than 4 hours working on a particular controller in the last year, what are the chances of ever recouping an investment of a man-week? We would probably have to keep using the same controller for at least 20 years, assuming the refactoring made us twice as productive, and that doesn’t seem likely to happen. Given that, it seems like the best option is to focus refactoring efforts where they give return on investment, which is those parts of the code that you do most of your work in.
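The 20-year figure is simple arithmetic, spelled out here as a small Java sketch. The one assumption that is not in the text is that a man-week is 40 working hours; the method and parameter names are just for illustration.

```java
/** Back-of-the-envelope payback time for a refactoring. */
final class RefactoringPayback {

    /** Years until the hours saved by working faster add up to the hours invested. */
    static double yearsToRecoup(double investedHours, double hoursWorkedPerYear, double speedUpFactor) {
        // Work that used to take hoursWorkedPerYear now takes hoursWorkedPerYear / speedUpFactor.
        double hoursSavedPerYear = hoursWorkedPerYear - hoursWorkedPerYear / speedUpFactor;
        return investedHours / hoursSavedPerYear;
    }

    public static void main(String[] args) {
        // Assumed 40-hour man-week, 4 hours of work per year on the controller, twice as productive.
        System.out.println(yearsToRecoup(40, 4, 2)); // prints 20.0
    }
}
```

Being twice as productive on 4 hours of work saves 2 hours a year, so a 40-hour investment takes 20 years to pay back. For one of the heavily modified controllers the same calculation comes out very differently, which is the argument for refactoring selectively.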

I think we’re more or less permanently stuck with two different generations of controller patterns. But it will be interesting to see what will happen over the next year or two – can this super-pattern of having annual growth rings of standardised solution patterns handle more than two generations? I’m not sure, but I believe it can.

Immutability: a constraint that empowers

Posted in Java, Software Development on May 4, 2010

In Effective Java (Second Edition, item 15), Josh Bloch mentions that he is of the opinion that Java programmers should have the default mindset that their objects should be immutable, and that mutability should be used only when strictly necessary. The reason for this post is that it seems to me that most Java code I read doesn’t follow this advice, and I have come to the conclusion that immutability is an amazing concept. My theory is that one of the reasons why immutability isn’t as widely used as it should be is that as programmers or architects, we usually want to open up possibilities: a flexible architecture allows for future growth and changes and gives as much freedom as possible to solve coding problems in the most suitable way. Immutability seems to go against that desire for flexibility in that it takes an option away: if your architecture involves immutable objects, you’ve reduced your freedom because those objects can’t change any longer. How can that be good?

The two main motivations for using immutability according to Effective Java are that a) it is easier to share immutable objects between threads and b) immutable objects are fundamentally simple because they cannot change state once instantiated. The former seems to be the most commonly repeated argument in favour of using immutable objects – I think probably because it is far more concrete: make objects immutable and they can easily be shared by threads. The latter, that the immutable objects are fundamentally simple, is the more powerful (or at least more ubiquitous) reason in my opinion, but the argument is harder to make. After all, there’s nothing you can do with an immutable object that you cannot also do with a mutable one, so why constrain your freedom? I hope to illustrate a couple of ways that you both open up macro-level architectural options and improve your code on the micro level by removing your ability to modify things.

Macro Level: Distributed Systems

First, I would like to spend some time on the concurrency argument and look at it from a more general perspective than just Java. Let’s take something that is familiar to anybody who has worked on a distributed system: caching. A cache is a (more) local copy of something where the master copy is for some reason expensive to fetch. Caching is extremely powerful and it is used literally everywhere in computing. The fact that the item in the cache could be different to the master copy, due to either one of them having changed, leads to a lot of problems that need solving. So there are algorithms like read-through, refresh-ahead, write-through, write-behind, and so on, that deal with the problem that the data in your cache or caches may be outdated or in a ‘future state’ when compared to the master copy. These problems are well-studied and solved, but even so, having to deal with stale data in caches adds complexity to any system that has to deal with it. With immutable objects, these problems just vanish. If you have a copy of a certain object, it is guaranteed to be the current one, because the object never changes. That is extremely powerful in designing a distributed architecture.

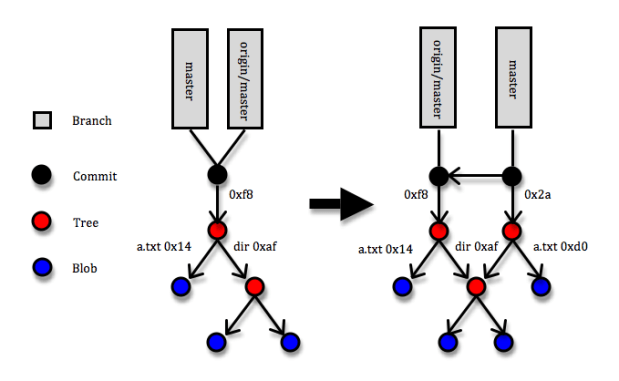

I wonder if I can write a blog post that doesn’t mention Git – apparently not this one at least :). Git is a marvellous example of a distributed architecture whose power comes to a large degree from reducing mutability. Most of the entities in Git are immutable: commits, trees and blobs, and all of them are referenced via keys that are SHA-1 hashes of their contents. If a blob (a file) changes, a new entity in Git is created with a new hash code. If a tree (a directory – essentially a list of hash codes and names that identify trees or blobs that live underneath it) changes through a file being added, removed or modified, a new tree entity with new contents and a new hash code is created. And whenever some changes are committed to a Git repository, a commit entity (with a reference to the new top tree entity and some metadata about who made the commit and when, a pointer to the previous commit/s, etc) that describes the change set is created. Since the reference to each object is uniquely defined by its content, two identical objects that are created separately will always have the same key. (If you want to learn more about Git internals, here is a great though not free explanation.) So what makes that brilliant? The answer is that the immutability of objects means your copy is guaranteed to be correct, and the SHA-1 object references mean that identical objects are guaranteed to have identical references. That speeds up identity checks – since collisions are extremely unlikely, identical references mean identical objects. Suppose somebody makes a commit that includes a blob (file) with hash code 14 and you fetch the commit to your local Git repository, and suppose further that a blob with hash code 14 is already in your repository. When Git fetches the commit, it’ll come to the tree that references blob 14 and simply note that “I’ve already got blob 14, so no need to fetch it”.

The diagram above shows a simplified picture of a Git repository where a commit is added. The root of the repository is a folder with a file called ‘a.txt’ and a directory called ‘dir’. Under the directory there’s a couple more files. In the commit, the a.txt file is changed. This means that three new entities are created: the new version of a.txt (hash code 0xd0), the new version of the root directory (hash code 0x2a), and the commit itself. Note that the new root directory still points to the old version of the ‘dir’ directory and that none of the objects that describe the previous version of the root directory are affected in any way.

That Git uses immutable objects in combination with references derivable from the content of objects is what opens up possibilities for being very efficient in terms of networking (only transferring necessary objects), local space storage (storing identical objects only once), comparisons and merges (when you reach a point where two trees point to the same object, you stop), etc., etc. Note that this usage of immutable objects is quite different from the one you read about in books like Effective Java and Java Concurrency in Practice – in those books, you can easily get the impression that immutability is something that requires taking some pretty special measures with regard to how you specify your classes, inheritance and constructors. I’d say that is only a part of the truth: if you do take those special measures in your Java code, you’ll get the benefit of some specific guarantees made by the Java Memory Model that will ensure you can safely share your immutable objects between threads inside a JVM. But even without solid guarantees about immutability like the JVM can provide, using immutability can provide huge architectural gains. So while Git doesn’t have any means of enforcing strict immutability – I can just edit the contents of something stored under .git/objects, with probable chaos ensuing – utilising it opens up for a very powerful architecture.
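The combination of immutable objects and content-derived keys can be sketched in a few lines of Java. This is a toy illustration, not how Git is implemented: the class and method names are invented, and the key is the SHA-1 of the raw bytes only, whereas Git also hashes a small header in front of the content.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.HashMap;
import java.util.Map;

/** Toy content-addressed store: the key of an object is the SHA-1 of its content. */
final class ContentStore {
    private final Map<String, byte[]> objects = new HashMap<>();

    /** Stores the content under its hash and returns the key; identical content is stored only once. */
    String put(String content) {
        byte[] bytes = content.getBytes(StandardCharsets.UTF_8);
        String key = sha1Hex(bytes);
        objects.putIfAbsent(key, bytes);
        return key;
    }

    /** "I've already got it, so no need to fetch it." */
    boolean contains(String key) {
        return objects.containsKey(key);
    }

    int size() {
        return objects.size();
    }

    static String sha1Hex(byte[] bytes) {
        try {
            MessageDigest digest = MessageDigest.getInstance("SHA-1");
            StringBuilder hex = new StringBuilder();
            for (byte b : digest.digest(bytes)) {
                hex.append(String.format("%02x", b));
            }
            return hex.toString();
        } catch (NoSuchAlgorithmException e) {
            // SHA-1 is one of the algorithms every Java platform is required to provide.
            throw new IllegalStateException(e);
        }
    }
}
```

Storing the same content twice yields the same key and leaves a single object in the store, and a `contains` check on the key is all that is needed to decide whether something has to be transferred. Both properties only hold because an object stored under a key is never modified afterwards.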

When you’re writing multi-threaded Java programs, use the strong guarantees you can get from defining your Java classes correctly, but don’t be afraid to make immutability a core architectural idea even if there is no way to actually formally enforce it.
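For the Java side of that advice, here is a minimal sketch of a class defined along the lines Effective Java recommends: a final class, final fields, no mutators, and a defensive copy of anything mutable passed in. The class itself and its names are made up for the example.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

/** Immutable value: final class, final fields, no setters, defensive copy of mutable input. */
final class Commit {
    private final String message;
    private final List<String> parentIds;

    Commit(String message, List<String> parentIds) {
        this.message = message;
        // Copy first, then wrap: later changes to the caller's list cannot leak in,
        // and callers of parentIds() cannot modify our copy.
        this.parentIds = Collections.unmodifiableList(new ArrayList<>(parentIds));
    }

    String message() {
        return message;
    }

    List<String> parentIds() {
        return parentIds;
    }

    /** "Changing" the object means creating a new one; this instance stays exactly as it was. */
    Commit withMessage(String newMessage) {
        return new Commit(newMessage, parentIds);
    }
}
```

Because all fields are final and nothing escapes during construction, an instance like this can be handed to other threads without synchronisation.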

Micro Level: Building Blocks

I guess the clearest point I can make about immutability, like most of the others who have written about it, is still one about concurrent execution. But I am a believer also in the second point about the simplicity that immutability gives you even if your system isn’t distributed. For me, the essence of that point is that you can disregard any immutable object as a source of weirdness when you’re trying to figure out how the code in front of you is working. Once you have confirmed to yourself that an immutable object is being instantiated correctly, it is guaranteed to remain correct for the rest of the logical flow you’re looking at. You can filter out that object and focus your valuable brainpower on other things. As a former chess player, I see a strong analogy: once you’ve played a bit, you stop seeing three pawns, a rook and a king, you see a king-side castle formation. This is a single unit that you know how it interacts with the rest of the pieces on the board. This allows you to simplify your mental model of the board, reducing clutter and giving you a clearer picture of the essentials. A mutable object resists simplification, so you’ll constantly need to be alert to something that might change it and affect the logic.

On top of the simplicity that the classes themselves gain, by making them immutable, you can communicate your intent as a designer clearly: “this object is read-only, and if you believe you want to change it in order to make some modification to the functionality of this code, you’ve either not yet understood how the design fits together or requirements have changed to such an extent that the design doesn’t support them any longer”. Immutable objects make for extremely solid and easily understood building blocks and give the readers clear signals about their intended usage.

When immutability doesn’t cut the mustard

There are very few things that are all beneficial, and this is of course the case with immutability. So what are the problems you run into with immutability? Well, first of all, no system is interesting without mutable state, so you’ll need at least some objects that are mutable – in Git, branches are a prime example of a mutable object, and hence also the one source of conflicts between different repositories. A branch is simply a pointer to the latest commit on that branch, and when you create new commits on a branch, the pointer is updated. In the diagram above, that is reflected by the ‘master’ label having moved to point to the latest commit, but the local copy of where ‘origin/master’ is pointing is unchanged and will remain so until the next push or fetch happens. In the meantime, the actual branch on the origin repository may have changed, so Git needs to handle possible conflicts there. So the branches suffer from the normal cache-related staleness and concurrent update problems, but thanks to most entities being immutable, these problems are confined to a small section of the system.
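A rough Java sketch of that idea: the only mutable thing is a small pointer, and an update is accepted only if the pointer still refers to the commit the caller last saw. This is a deliberate simplification with invented names; Git’s real rules for updating branches are more involved.

```java
/** Hypothetical sketch: a branch is a small mutable pointer to an immutable commit id. */
final class Branch {
    private String head;

    Branch(String initialCommitId) {
        this.head = initialCommitId;
    }

    synchronized String head() {
        return head;
    }

    /**
     * Moves the branch to newCommitId only if it still points at expectedCommitId.
     * Returns false if somebody else moved the branch in the meantime (a stale view).
     */
    synchronized boolean advance(String expectedCommitId, String newCommitId) {
        if (!head.equals(expectedCommitId)) {
            return false;
        }
        head = newCommitId;
        return true;
    }
}
```

All the staleness handling lives in this one small class; the commits the pointer refers to never need any of it.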

Another thing you’ll need, which is provided for free by a JVM but not in systems in general, is garbage collection. You’ll keep creating new objects instead of changing the existing ones, and for most kinds of systems, that means you’ll want to get rid of the outdated stuff. Most of the time, that stuff will simply sit on some disk somewhere, and disk space is cheap which means you don’t need to get super-sophisticated with your garbage collection algorithms. In general, the memory allocation and garbage collection overhead that you incur from creating new objects instead of updating existing ones is much less of a problem than it seems at first glance. I’ve personally run into one case where it was clearly impossible to make objects immutable: in Ardor3D, an open-source Java 3D engine, where the most commonly used class is Vector3, a vector of three doubles. This feels like it is a great candidate for being immutable since it is a typical small, simple value object. However, the main task of a 3D engine is to update many thousands of positions of things at least 50 times per second, so in this case, immutability would be completely impossible. Our solution there was to signal ‘immutability intent’ by having the Vector3 class (and some similar classes) implement a ReadOnlyVector3 interface and use that interface when some class needed a vector but wasn’t planning on modifying it.
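The read-only interface approach looks roughly like this. The sketch is heavily simplified and is not the actual Ardor3D source: the method names and the `VectorMath` helper are assumptions made for the example.

```java
/** Read-only view: code that accepts this type signals that it will not modify the vector. */
interface ReadOnlyVector3 {
    double getX();
    double getY();
    double getZ();
}

/** Mutable implementation, updated in place to avoid allocating a new object per change. */
final class Vector3 implements ReadOnlyVector3 {
    private double x;
    private double y;
    private double z;

    Vector3(double x, double y, double z) {
        this.x = x;
        this.y = y;
        this.z = z;
    }

    @Override public double getX() { return x; }
    @Override public double getY() { return y; }
    @Override public double getZ() { return z; }

    /** Adds the other vector to this one in place and returns this vector. */
    Vector3 addLocal(ReadOnlyVector3 other) {
        x += other.getX();
        y += other.getY();
        z += other.getZ();
        return this;
    }
}

/** Hypothetical consumer: only needs to read, so it asks for the read-only type. */
final class VectorMath {
    static double length(ReadOnlyVector3 v) {
        return Math.sqrt(v.getX() * v.getX() + v.getY() * v.getY() + v.getZ() * v.getZ());
    }
}
```

This gives no guarantee, since the underlying object can still change and a determined caller can cast back to Vector3, but it does communicate intent in the method signature.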

There’s a lot more solid but semi-concrete advice about why immutability is useful outside of the context of sharing state between threads in Effective Java, so if you’re not convinced about it yet, I would urge you to re-read item 15. Or maybe the whole book, since everything Josh Bloch says is fantastic. To me, the use of immutability in Git shows that removing some aspects of freedom can unlock opportunities in other parts of the system. I also think that you should do everything you can do that will make your code easier for the reader to understand. Reducing mutability can have a game-changing impact on the macro level of your system, and it is guaranteed to help remove mental clutter and thereby improve your code on the micro level.

Code sharing: Use Maven

Posted in Code Management on May 1, 2010

Maven’s slow progress towards becoming the most accepted Java build tool seems to continue, although a lot of people are still annoyed enough with its numerous warts to prefer Ant or something else. My personal opinion is that Maven is the best build solution for Java programs that is out there, and as somebody said – I’ve been trying to find the quote, but I can’t seem to locate it – when an Ant build is complicated, you blame yourself for writing a bad build.xml, but when it is hard to get Maven to behave, you blame Maven. With Ant, you program it, so any problems are clearly due to a poorly structured program. With Maven you don’t tell it how to do things, you try to tell it what should be done, so any problems feel like the fault of the tool. The thing is, though, that Maven tries to take much more responsibility for some of the issues that lead to complex build scripts than something like Ant does.

I’ve certainly spent a lot of time cursing poorly written build scripts for Ant and other tools, and I’ve also spent a lot of time cursing Maven when it doesn’t do what I want it to. But the latter time is decreasing as Maven keeps improving as a build tool. There have been lots of attempts to create other tools that are supposed to make builds easier than Maven does, but from what I have seen, nothing has yet really succeeded in providing a clearly better option (I’ve looked at Buildr and Raven, for instance). I think the truth is simply that the build process for a large system is a complex problem to solve, so one cannot expect it to be free of hassles. Maven is the best tool out there for the moment, but will surely be replaced by something better at some point.

So, using Maven isn’t going to be problem-free. But it can help with a lot of things, particularly in the context of sharing code between multiple teams. The obvious thing it helps with is the single benefit that most people agree that Maven has – its way of managing dependencies and the massive repository infrastructure and dependency database that is just available out there. On top of that, building Maven projects in Hudson is dead easy, and there’s a whole slew of really nice tools that come with Maven plugins that you can use that enable you to get all kinds of reports and metadata about your code. My current favourite is Sonar, which is great if you want to keep track of how your code base evolves from some kind of aggregated perspective.

Here are some things you’ll want to do if you decide to use Maven for the various projects that make up your system:

- Use Nexus as an internal repository for build artifacts.

- Use the Maven Release plugin to create releases of internal artifacts.

- Create a shared POM for the whole code base where you can define shared settings for your builds.

The word ‘repository’ is a little overloaded in Maven, so it may be confusing. Here’s a diagram that explains the concept and shows some of the things that a repository manager like Nexus can help you with:

The setup includes a Git server (because you use Git) for source control, a Hudson server (or a set of them) that does continuous integration, a Nexus-managed artifact repository and a developer machine. The Nexus server has three repositories in it: internal releases, internal snapshots and a cache of external repositories. The last of these is only there as a performance improvement. The other two are the way that you distribute Maven artifacts within your organisation. When a Maven build runs on the Hudson or developer machines, Maven will use artifacts from the local repository on the machine – by default located in a folder under the user’s home directory. If a released version of an artifact isn’t present in the local repository, it will be downloaded from Nexus, and snapshot versions will periodically be refreshed, even if present locally. In the example setup, new snapshots are typically deployed to the Nexus repository by the Hudson server, and released versions are typically deployed by the developer producing the release. Note that both Hudson and developers are likely to install snapshots to the local repository.
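To make the download rules above a bit more concrete, here is a minimal sketch of the decision as I understand it. This is a simplified model, not Maven’s actual code: the ArtifactResolution class, its method names and the refreshInterval parameter are all assumptions made for illustration.

```java
import java.time.Duration;
import java.time.Instant;

// Hypothetical model of when a build goes to the remote repository (Nexus).
final class ArtifactResolution {
    static boolean isSnapshot(String version) {
        return version.endsWith("-SNAPSHOT");
    }

    // lastChecked is only consulted for snapshots that are present locally.
    static boolean shouldFetchFromRemote(String version,
                                         boolean presentLocally,
                                         Instant lastChecked,
                                         Instant now,
                                         Duration refreshInterval) {
        if (!presentLocally) {
            return true; // nothing in the local repository, so download
        }
        if (!isSnapshot(version)) {
            return false; // releases are assumed immutable, the local copy is good
        }
        // Snapshots are mutable, so refresh once the interval has passed.
        return Duration.between(lastChecked, now).compareTo(refreshInterval) >= 0;
    }
}
```

With a refresh interval of one day, a locally present 1.0 release is never fetched again, while a locally present 1.1-SNAPSHOT that was last checked two days ago is.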

I’ve tried a couple of other repository managers (Archiva, Artifactory and Maven-Proxy), but Nexus has been by a pretty wide margin the best – robust, easy to use and easy to understand. It’s been a year or two since I looked at the other ones, so they may have improved since.

Having an internal repository opens the door to code sharing by providing a uniform mechanism for distributing updated versions of internal libraries using the standard Maven deploy command. Maven has two types of artifact versions: releases and snapshots. Releases are assumed to be immutable and snapshots mutable, so updating a snapshot in the internal repository will affect any build that downloads the updated snapshot, whereas releases are supposed to be deployed to the internal repository once only – any subsequent deployments should deploy something that is identical. Snapshots are tricky, especially when branching. If you create two branches of the same library and fail to ensure that the two branches have different snapshot versions, the two branches will interfere.

There is interference between the two branches because they both create updates to the same artifact in the Maven repositories. Depending on the ordering of these updates, builds may succeed or fail seemingly at random. At Shopzilla, we typically solve this problem in two ways: for some shared projects, where we have long-lived/permanent team-specific branches, the team name is included in the version number of the artifact, and for short-lived user story branches, the story ID is included in the version number. So if I need to create a branch off of version 2.3-SNAPSHOT for story S3765, I’ll typically label the branch S3765 and change the version of the Maven artifact to 2.3-S3765-SNAPSHOT. The Maven release plugin has a command that simplifies branching, but for whatever reason, I never seem to use it. Either way, being careful about managing branches and Maven versions is necessary.
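The version naming convention described above is simple enough to sketch in a few lines. The BranchVersions class and its forBranch method are hypothetical helpers of my own, not something provided by Maven or the release plugin.

```java
// Hypothetical helper that derives a branch-specific snapshot version.
final class BranchVersions {
    private static final String SNAPSHOT_SUFFIX = "-SNAPSHOT";

    // Inserts the branch label (a team name or story ID) before the snapshot suffix.
    static String forBranch(String snapshotVersion, String branchLabel) {
        if (!snapshotVersion.endsWith(SNAPSHOT_SUFFIX)) {
            throw new IllegalArgumentException("Not a snapshot version: " + snapshotVersion);
        }
        String base = snapshotVersion.substring(
                0, snapshotVersion.length() - SNAPSHOT_SUFFIX.length());
        return base + "-" + branchLabel + SNAPSHOT_SUFFIX;
    }
}
```

Calling BranchVersions.forBranch("2.3-SNAPSHOT", "S3765") gives 2.3-S3765-SNAPSHOT, so the story branch and the main line no longer deploy to the same artifact version.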

A situation where I do use the Maven release plugin a lot is when making releases of shared libraries. I advocate a workflow where you make a new release of your top-level project every time you make a live update, and because you want to make live updates frequently and you use scrum, that means a new Maven release with every iteration. To make a Maven release of a project, you have to eliminate all snapshot dependencies – this is a necessary requirement for immutability – so releasing the top-level project means making release versions of all its updated dependencies. Doing this frequently reduces the risk of interference between teams by shortening the ‘checkout, modify, checkin’ cycle.
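The ‘no snapshot dependencies’ rule can be illustrated with a small check. This is only a sketch of the rule, not how the release plugin implements it; the ReleaseCheck class and the artifact names in the usage note are made up for illustration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

// Hypothetical check of the precondition for making a release.
final class ReleaseCheck {
    // Returns "artifact:version" for every dependency that is still a snapshot.
    static List<String> snapshotDependencies(Map<String, String> dependencyVersions) {
        List<String> snapshots = new ArrayList<>();
        for (Map.Entry<String, String> entry : dependencyVersions.entrySet()) {
            if (entry.getValue().endsWith("-SNAPSHOT")) {
                snapshots.add(entry.getKey() + ":" + entry.getValue());
            }
        }
        return snapshots;
    }

    // A release must be immutable, so it cannot depend on anything mutable.
    static boolean canRelease(Map<String, String> dependencyVersions) {
        return snapshotDependencies(dependencyVersions).isEmpty();
    }
}
```

For a project that depends on a hypothetical shared-utils at 1.4 and shared-model at 2.3-SNAPSHOT, canRelease returns false until shared-model has been released and the dependency updated to the released version.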

See the pom file example below for some hands-on pom.xml settings that are needed to enable using the release plugin.

The final tip for code sharing using Maven that I wanted to give is to use a shared parent POM that contains settings that should be shared between projects. The main reason is to reduce code duplication – any build file is code, after all, and Maven build files are not as easy to understand as one would like, so simplifying them is very valuable. Here’s some stuff that I think should go into a shared pom.xml file:

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/maven-v4_0_0.xsd">
  <modelVersion>4.0.0</modelVersion>
  <groupId>com.mycompany</groupId>
  <artifactId>shared-pom</artifactId>
  <name>Company Shared pom</name>
  <version>1.0-SNAPSHOT</version>
  <packaging>pom</packaging>

  <!--
    One of the things that is necessary in order to be able to use the
    release plugin is to specify the scm/developerConnection element.
    I usually also specify the plain connection, although
    I think that is only used for generating project
    documentation, a Maven feature I don't find particularly useful
    personally.
    A section like this needs to be present in every project for which
    you want to be able to use the release plugin, with the
    project-specific Git URL.
  -->
  <scm>
    <connection>scm:git:git://GITHOST/GITPROJECT</connection>
    <developerConnection>scm:git:git://GITHOST/GITPROJECT</developerConnection>
  </scm>

  <build>
    <!--
      Use the plugins section to define Maven plugin configurations that
      you want to share between all projects.
    -->
    <plugins>
      <!--
        Compiler settings that are typically going to be identical in all
        projects. With a name like Måhlén, you get particularly sensitive
        to using the only useful character encoding there is.. ;)
      -->
      <plugin>
        <artifactId>maven-compiler-plugin</artifactId>
        <configuration>
          <source>1.6</source>
          <target>1.6</target>
          <encoding>UTF-8</encoding>
        </configuration>
      </plugin>

      <!--
        Tell Maven to create a source bundle artifact during the package
        phase. This is extremely useful when sharing code, as the act of
        sharing means you'll want to create a relatively large number of
        smallish artifacts, so creating IDE projects that refer directly
        to the source code is unmanageable. But the Maven integration of
        a good IDE will fetch the Maven source bundle if available, so if
        you navigate to a class that is included via Maven from your
        top-level project, you'll still see the source version - and even
        the right source version, because you'll get what corresponds
        to the binary that has been linked.
      -->
      <plugin>
        <artifactId>maven-source-plugin</artifactId>
        <executions>
          <execution>
            <phase>package</phase>
            <goals>
              <goal>jar</goal>
            </goals>
          </execution>
        </executions>
      </plugin>

      <!--
        Ensure that a javadoc jar is being generated and deployed. This
        is useful for similar reasons as source bundle generation,
        although to a lesser degree in my opinion. Javadoc is great, but
        the source is always up to date.
      -->
      <plugin>
        <artifactId>maven-javadoc-plugin</artifactId>
        <executions>
          <execution>
            <phase>package</phase>
            <goals>
              <goal>jar</goal>
            </goals>
          </execution>
        </executions>
      </plugin>

      <!--
        The below configuration information was necessary to ensure that
        you can use the maven release plugin with Git as a version control
        system. The exact version numbers that you want to use are likely
        to have changed since then, and it may even be that Git support is
        more closely integrated nowadays, so less explicit configuration
        is needed - I haven't tested that since maybe March 2009.
      -->
      <plugin>
        <groupId>org.apache.maven.plugins</groupId>
        <artifactId>maven-release-plugin</artifactId>
        <dependencies>
          <dependency>
            <groupId>org.apache.maven.scm</groupId>
            <artifactId>maven-scm-provider-gitexe</artifactId>
            <version>1.1</version>
          </dependency>
          <dependency>
            <groupId>org.codehaus.plexus</groupId>
            <artifactId>plexus-utils</artifactId>
            <version>1.5.7</version>
          </dependency>
        </dependencies>
      </plugin>
      <plugin>
        <groupId>org.apache.maven.plugins</groupId>
        <artifactId>maven-scm-plugin</artifactId>
        <version>1.1</version>
        <dependencies>
          <dependency>
            <groupId>org.apache.maven.scm</groupId>
            <artifactId>maven-scm-provider-gitexe</artifactId>
            <version>1.1</version>
          </dependency>
          <dependency>
            <groupId>org.codehaus.plexus</groupId>
            <artifactId>plexus-utils</artifactId>
            <version>1.5.7</version>
          </dependency>
        </dependencies>
      </plugin>
    </plugins>
  </build>

  <!--
    Configuration of internal repositories so that the sub-projects
    know where to download internally created artifacts from. Note
    that due to a bootstrapping issue, this configuration needs to
    be duplicated in individual projects. This file, the shared POM,
    is available from the Nexus repo, but if the project POM doesn't
    contain the repo config, the project build won't know where to
    download the shared POM.
  -->
  <repositories>
    <!-- internal Nexus repository for released artifacts -->
    <repository>
      <id>internal-releases</id>
      <url>http://NEXUSHOST/nexus/content/repositories/internal-releases</url>
      <releases><enabled>true</enabled></releases>
      <snapshots><enabled>false</enabled></snapshots>
    </repository>

    <!-- internal Nexus repository for SNAPSHOT artifacts -->
    <repository>
      <id>internal-snapshots</id>
      <url>http://NEXUSHOST/nexus/content/repositories/internal-snapshots</url>
      <releases><enabled>false</enabled></releases>
      <snapshots><enabled>true</enabled></snapshots>
    </repository>

    <!--
      Nexus repository cache for third party repositories such as
      ibiblio. This is not necessary, but is likely to be a
      performance improvement for your builds.
    -->
    <repository>
      <id>3rd party</id>